Visual SLAM

Visual simultaneous localization and mapping (vSLAM) is the process of estimating the position and orientation of a camera while simultaneously building a map of its environment using visual inputs. Computer Vision Toolbox™ supports vSLAM workflows for monocular, RGB-D, and stereo cameras, with optional inertial sensor fusion for improved accuracy. These capabilities are essential for applications in robotics, augmented reality, and autonomous navigation. For guidance on choosing a vSLAM workflow, see Choose SLAM Workflow Based on Sensor Data.
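The following sketch makes the definition above more concrete. It is built on a generic pinhole camera model, which is an assumption here and not the Computer Vision Toolbox API. It shows two ideas central to feature-based vSLAM. The first is projecting a 3-D map point into an image given a camera pose. The second is measuring the reprojection error that tracking and bundle adjustment typically minimize. It also shows why a monocular camera alone cannot recover metric scale. The helper names (`mat_vec`, `project`, `reprojection_error`) are hypothetical.

```python
# Minimal pinhole-camera sketch (hypothetical helpers, not toolbox API).
# Assumes an ideal pinhole camera with no lens distortion and a
# world-to-camera pose (R, t): X_cam = R @ X_world + t.


def mat_vec(R, v):
    """Multiply a 3x3 matrix (list of rows) by a 3-vector."""
    return [sum(R[i][j] * v[j] for j in range(3)) for i in range(3)]


def project(point_world, R, t, fx, fy, cx, cy):
    """Project a 3-D world point into pixel coordinates (u, v)."""
    x, y, z = (a + b for a, b in zip(mat_vec(R, point_world), t))
    if z <= 0:
        raise ValueError("point is behind the camera")
    return (fx * x / z + cx, fy * y / z + cy)


def reprojection_error(observed_uv, point_world, R, t, fx, fy, cx, cy):
    """Pixel distance between an observed feature and the projected map point."""
    u, v = project(point_world, R, t, fx, fy, cx, cy)
    return ((u - observed_uv[0]) ** 2 + (v - observed_uv[1]) ** 2) ** 0.5


if __name__ == "__main__":
    identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
    intrinsics = dict(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
    point, t = [0.5, -0.2, 4.0], [0.1, 0.0, 0.0]
    print(project(point, identity, t, **intrinsics))

    # Scale the scene and the camera translation by the same factor s:
    # the pixel coordinates do not change, so a monocular camera cannot observe s.
    s = 3.0
    scaled = project([s * p for p in point], identity, [s * c for c in t], **intrinsics)
    print(scaled)
```

Scaling both the scene and the camera translation by the same factor leaves every projection unchanged. As a result, monocular vSLAM recovers the trajectory only up to an unknown scale. A stereo baseline, a depth sensor, or inertial measurements can supply that missing scale.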

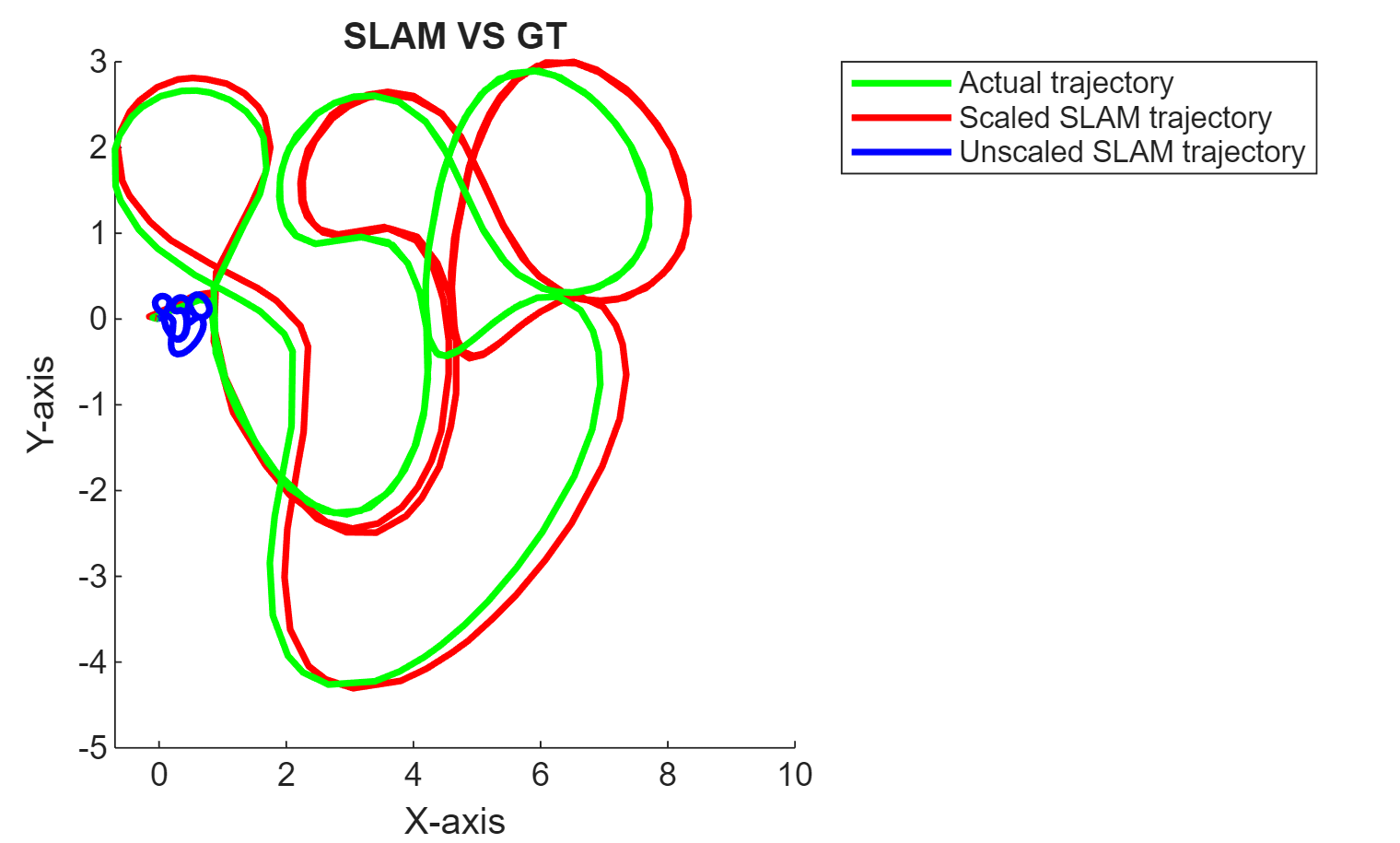

Each visual SLAM object (monovslam, rgbdvslam, and stereovslam) provides ready-to-use tools to add frames, track keyframes, compute 3-D map points, estimate camera poses, close loops, and visualize data throughout the camera trajectory. You can also evaluate the performance of the vSLAM algorithm by comparing the estimated camera trajectory to the ground truth using the compareTrajectories function. In addition, the toolbox provides functionality for building your own visual SLAM pipeline.
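Comparing an estimated trajectory against ground truth can be illustrated with a simplified absolute trajectory error (ATE). This is a sketch under stated assumptions and not how compareTrajectories is implemented. Positions are matched one-to-one, and the two trajectories are assumed to already share orientation. Under those assumptions, only a scale and a translation are fitted by least squares. Fitting the scale matters for monocular estimates, whose scale is arbitrary. A full similarity alignment, such as the Umeyama method, would also estimate a rotation. The function names are hypothetical.

```python
# Simplified ATE sketch (hypothetical helpers, not the toolbox implementation).
# Assumes one-to-one position correspondences and already-aligned orientation,
# so only a scale s and translation t are estimated.
import math


def _mean(points):
    n = len(points)
    return [sum(p[i] for p in points) / n for i in range(3)]


def align_scale_translation(estimated, ground_truth):
    """Least-squares s, t minimizing sum ||s * e_i + t - g_i||^2."""
    e_mean, g_mean = _mean(estimated), _mean(ground_truth)
    num = den = 0.0
    for e, g in zip(estimated, ground_truth):
        ec = [e[i] - e_mean[i] for i in range(3)]  # centered estimate
        gc = [g[i] - g_mean[i] for i in range(3)]  # centered ground truth
        num += sum(a * b for a, b in zip(ec, gc))
        den += sum(a * a for a in ec)
    if den == 0.0:
        raise ValueError("estimated trajectory has no spread; scale is undefined")
    s = num / den
    t = [g_mean[i] - s * e_mean[i] for i in range(3)]
    return s, t


def ate_rmse(estimated, ground_truth, align=True):
    """Root-mean-square position error, optionally after scale/translation alignment."""
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    s, t = (align_scale_translation(estimated, ground_truth) if align
            else (1.0, [0.0, 0.0, 0.0]))
    sq = 0.0
    for e, g in zip(estimated, ground_truth):
        sq += sum((s * e[i] + t[i] - g[i]) ** 2 for i in range(3))
    return math.sqrt(sq / len(estimated))


if __name__ == "__main__":
    gt = [(0, 0, 0), (1, 0, 0), (2, 1, 0), (3, 1, 1)]
    # A monocular-style estimate: correct shape, but half the scale and offset.
    est = [(0.5 * x + 2, 0.5 * y - 1, 0.5 * z) for x, y, z in gt]
    print(ate_rmse(est, gt, align=False), ate_rmse(est, gt))
```

Without alignment, the scale and offset dominate the error. After alignment, the remaining error reflects only how well the shape of the trajectory was recovered.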

You can also use the toolbox to generate code for vSLAM algorithms and deploy them. For more information, see the Build and Deploy Visual SLAM Algorithm with ROS in MATLAB and Performant and Deployable Monocular Visual SLAM examples.

Topics

Ready-To-Use Visual SLAM Functions

- Performant and Deployable Monocular Visual SLAM

Use visual inputs from a camera to perform vSLAM and generate multithreaded C/C++ code.

- Performant Monocular Visual-Inertial SLAM

Use visual inputs from a camera and inertial measurements from an IMU to perform visual-inertial SLAM (viSLAM) in real time. (Since R2025a)

- Choose SLAM Workflow Based on Sensor Data

Choose the right simultaneous localization and mapping (SLAM) workflow and find topics, examples, and supported features.

- How to Improve Accuracy in Visual SLAM

Tips to improve the accuracy, robustness, and efficiency of your visual SLAM system.

Build Your Own Visual SLAM Pipeline

- Monocular Visual Simultaneous Localization and Mapping

Perform visual simultaneous localization and mapping (vSLAM) using images from a monocular camera.

- Monocular Visual-Inertial SLAM

Perform SLAM by combining images captured by a monocular camera with measurements from an IMU.

- Stereo Visual Simultaneous Localization and Mapping

Process image data from a stereo camera to build a map of an outdoor environment and estimate the trajectory of the camera.
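Unlike a monocular camera, a calibrated stereo rig observes metric depth directly. The sketch below assumes rectified images and square pixels. Its parameter names for the focal length in pixels, the baseline in meters, and the principal point are hypothetical. It triangulates depth from disparity as Z = f·B/d, then back-projects the pixel into a 3-D point in the left camera frame. An RGB-D camera applies the same back-projection to each pixel of its depth image.

```python
# Stereo depth sketch (hypothetical helpers, not toolbox API).
# Assumes rectified images, square pixels (fx == fy == f), focal length f in
# pixels, baseline B in meters, and principal point (cx, cy) in pixels.


def depth_from_disparity(disparity_px, f_px, baseline_m):
    """Depth Z = f * B / d for a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the rig")
    return f_px * baseline_m / disparity_px


def back_project(u, v, depth_m, f_px, cx, cy):
    """Left-camera 3-D point (X, Y, Z) for pixel (u, v) at the given depth."""
    return ((u - cx) * depth_m / f_px, (v - cy) * depth_m / f_px, depth_m)


if __name__ == "__main__":
    f, baseline, cx, cy = 700.0, 0.12, 320.0, 240.0
    z = depth_from_disparity(21.0, f, baseline)  # a feature matched 21 px apart
    print(z, back_project(495.0, 240.0, z, f, cx, cy))
```

Because Z = f·B/d, a fixed disparity error produces a depth error that grows roughly as Z²/(f·B). As a result, distant points in outdoor scenes are triangulated less precisely than nearby ones.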