incrementalLearner

Description

IncrementalMdl = incrementalLearner(Mdl) returns a Gaussian kernel regression model for incremental learning, IncrementalMdl, using the parameters and hyperparameters of the traditionally trained Gaussian kernel regression model Mdl. Because its property values reflect the knowledge gained from Mdl, IncrementalMdl can predict responses given new observations, and it is warm, meaning that its predictive performance is tracked.

IncrementalMdl = incrementalLearner(Mdl,Name=Value) uses additional options specified by one or more name-value arguments. Some options require you to train IncrementalMdl before its predictive performance is tracked. For example, MetricsWarmupPeriod=50,MetricsWindowSize=100 specifies a preliminary incremental training period of 50 observations before performance metrics are tracked, and specifies processing 100 observations before updating the window performance metrics.

Examples

Train a kernel regression model by using fitrkernel, and then convert it to an incremental learner.

Load and Preprocess Data

Load the 2015 NYC housing data set. For more details on the data, see NYC Open Data.

load NYCHousing2015

Extract the response variable SALEPRICE from the table. For numerical stability, scale SALEPRICE by 1e6.

Y = NYCHousing2015.SALEPRICE/1e6;
NYCHousing2015.SALEPRICE = [];

To reduce computational cost for this example, remove the NEIGHBORHOOD column, which contains a categorical variable with 254 categories.

NYCHousing2015.NEIGHBORHOOD = [];

Create dummy variable matrices from the other categorical predictors.

catvars = ["BOROUGH","BUILDINGCLASSCATEGORY"];
dumvarstbl = varfun(@(x)dummyvar(categorical(x)),NYCHousing2015, ...
    InputVariables=catvars);
dumvarmat = table2array(dumvarstbl);
NYCHousing2015(:,catvars) = [];

Treat all other numeric variables in the table as predictors of sales price. Concatenate the matrix of dummy variables to the rest of the predictor data.

idxnum = varfun(@isnumeric,NYCHousing2015,OutputFormat="uniform");

X = [dumvarmat NYCHousing2015{:,idxnum}];

Train Kernel Regression Model

Fit a kernel regression model to the entire data set.

Mdl = fitrkernel(X,Y)

Mdl = 
  RegressionKernel
              ResponseName: 'Y'
                   Learner: 'svm'
    NumExpansionDimensions: 2048
               KernelScale: 1
                    Lambda: 1.0935e-05
             BoxConstraint: 1
                   Epsilon: 0.0549

  Properties, Methods

Mdl is a RegressionKernel model object representing a traditionally trained kernel regression model.

Convert Trained Model

Convert the traditionally trained kernel regression model to a model for incremental learning.

IncrementalMdl = incrementalLearner(Mdl)

IncrementalMdl = 
  incrementalRegressionKernel
                    IsWarm: 1
                   Metrics: [1×2 table]
         ResponseTransform: 'none'
    NumExpansionDimensions: 2048
               KernelScale: 1

  Properties, Methods

IncrementalMdl is an incrementalRegressionKernel model object prepared for incremental learning.

The incrementalLearner function initializes the incremental learner by passing model parameters to it, along with other information Mdl extracted from the training data.

IncrementalMdl is warm (IsWarm is 1), which means that incremental learning functions can start tracking performance metrics.

By default, incrementalRegressionKernel trains the model using the adaptive scale-invariant solver, whereas fitrkernel trained Mdl using the Limited-memory Broyden-Fletcher-Goldfarb-Shanno (LBFGS) solver.

Predict Responses

An incremental learner created from converting a traditionally trained model can generate predictions without further processing.

Predict sales prices for all observations using both models.

ttyfit = predict(Mdl,X);
ilyfit = predict(IncrementalMdl,X);
compareyfit = norm(ttyfit - ilyfit)

compareyfit = 0

The difference between the fitted values generated by the models is 0.

Use a trained kernel regression model to initialize an incremental learner. Prepare the incremental learner by specifying a metrics warm-up period and a metrics window size.

Load the robot arm data set.

load robotarm

For details on the data set, enter Description at the command line.

Randomly partition the data into 5% and 95% sets: the first set for training a model traditionally, and the second set for incremental learning.

n = numel(ytrain);
rng(1) % For reproducibility
cvp = cvpartition(n,Holdout=0.95);
idxtt = training(cvp);
idxil = test(cvp);

% 5% set for traditional training
Xtt = Xtrain(idxtt,:);
Ytt = ytrain(idxtt);

% 95% set for incremental learning
Xil = Xtrain(idxil,:);
Yil = ytrain(idxil);

Fit a kernel regression model to the first set.

TTMdl = fitrkernel(Xtt,Ytt);

Convert the traditionally trained kernel regression model to a model for incremental learning. Specify the following:

A performance metrics warm-up period of 2000 observations.

A metrics window size of 500 observations.

Use of epsilon insensitive loss, MSE, and mean absolute error (MAE) to measure the performance of the model. The software supports epsilon insensitive loss and MSE as built-in metrics, but not MAE. Create an anonymous function that measures the absolute error of each new observation. Create a structure array containing the name MeanAbsoluteError and its corresponding function.

maefcn = @(z,zfit)abs(z - zfit);
maemetric = struct(MeanAbsoluteError=maefcn);
IncrementalMdl = incrementalLearner(TTMdl,MetricsWarmupPeriod=2000,MetricsWindowSize=500, ...
    Metrics={"epsiloninsensitive","mse",maemetric});

Fit the incremental model to the rest of the data by using the updateMetricsAndFit function. At each iteration:

Simulate a data stream by processing 50 observations at a time.

Overwrite the previous incremental model with a new one fitted to the incoming observations.

Store the cumulative metrics, window metrics, and number of training observations to see how they evolve during incremental learning.

% Preallocation
nil = numel(Yil);
numObsPerChunk = 50;
nchunk = floor(nil/numObsPerChunk);
ei = array2table(zeros(nchunk,2),VariableNames=["Cumulative","Window"]);
mse = array2table(zeros(nchunk,2),VariableNames=["Cumulative","Window"]);
mae = array2table(zeros(nchunk,2),VariableNames=["Cumulative","Window"]);
% Initial count plus one entry per chunk
numtrainobs = [IncrementalMdl.NumTrainingObservations; zeros(nchunk,1)];

% Incremental fitting
for j = 1:nchunk
    ibegin = min(nil,numObsPerChunk*(j-1) + 1);
    iend = min(nil,numObsPerChunk*j);
    idx = ibegin:iend;
    IncrementalMdl = updateMetricsAndFit(IncrementalMdl,Xil(idx,:),Yil(idx));
    ei{j,:} = IncrementalMdl.Metrics{"EpsilonInsensitiveLoss",:};
    mse{j,:} = IncrementalMdl.Metrics{"MeanSquaredError",:};
    mae{j,:} = IncrementalMdl.Metrics{"MeanAbsoluteError",:};
    numtrainobs(j+1) = IncrementalMdl.NumTrainingObservations;
end

IncrementalMdl is an incrementalRegressionKernel model object trained on all the data in the stream. During incremental learning and after the model is warmed up, updateMetricsAndFit checks the performance of the model on the incoming observations, and then fits the model to those observations.

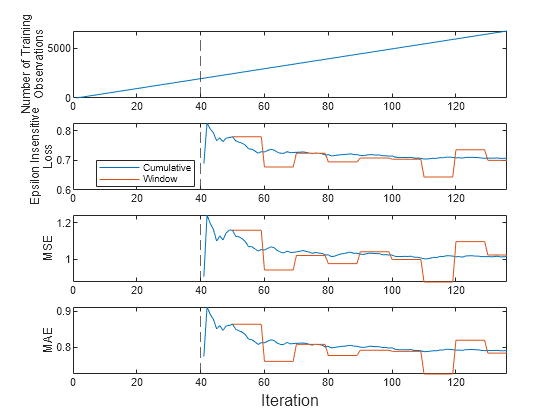

Create a trace plot of the number of training observations and the performance metrics on separate tiles.

t = tiledlayout(4,1);
nexttile
plot(numtrainobs)
xlim([0 nchunk])
xline(IncrementalMdl.MetricsWarmupPeriod/numObsPerChunk,"--")
ylabel(["Number of Training","Observations"])
nexttile
plot(ei.Variables)
xlim([0 nchunk])
ylabel(["Epsilon Insensitive","Loss"])
xline(IncrementalMdl.MetricsWarmupPeriod/numObsPerChunk,"--")
legend(ei.Properties.VariableNames,Location="best")
nexttile
plot(mse.Variables)
xlim([0 nchunk])
ylabel("MSE")
xline(IncrementalMdl.MetricsWarmupPeriod/numObsPerChunk,"--")
nexttile
plot(mae.Variables)
xlim([0 nchunk])
ylabel("MAE")
xline(IncrementalMdl.MetricsWarmupPeriod/numObsPerChunk,"--")
xlabel(t,"Iteration")

The plot suggests that updateMetricsAndFit does the following:

Fit the model during all incremental learning iterations.

Compute the performance metrics after the metrics warm-up period only.

Compute the cumulative metrics during each iteration.

Compute the window metrics after processing 500 observations.

The default solver for incrementalRegressionKernel is the adaptive scale-invariant solver, which does not require hyperparameter tuning before you fit a model. However, if you specify either the standard stochastic gradient descent (SGD) or average SGD (ASGD) solver instead, you can also specify an estimation period, during which the incremental fitting functions tune the learning rate.

Load and shuffle the 2015 NYC housing data set. For more details on the data, see NYC Open Data.

load NYCHousing2015
rng(1) % For reproducibility
n = size(NYCHousing2015,1);
shuffidx = randsample(n,n);
NYCHousing2015 = NYCHousing2015(shuffidx,:);

Extract the response variable SALEPRICE from the table. For numerical stability, scale SALEPRICE by 1e6.

Y = NYCHousing2015.SALEPRICE/1e6;
NYCHousing2015.SALEPRICE = [];

To reduce computational cost for this example, remove the NEIGHBORHOOD column, which contains a categorical variable with 254 categories.

NYCHousing2015.NEIGHBORHOOD = [];

Create dummy variable matrices from the categorical predictors.

catvars = ["BOROUGH","BUILDINGCLASSCATEGORY"];
dumvarstbl = varfun(@(x)dummyvar(categorical(x)),NYCHousing2015, ...
    InputVariables=catvars);
dumvarmat = table2array(dumvarstbl);
NYCHousing2015(:,catvars) = [];

Treat all other numeric variables in the table as predictors of sales price. Concatenate the matrix of dummy variables to the rest of the predictor data.

idxnum = varfun(@isnumeric,NYCHousing2015,OutputFormat="uniform");

X = [dumvarmat NYCHousing2015{:,idxnum}];

Randomly partition the data into 5% and 95% sets: the first set for training a model traditionally, and the second set for incremental learning.

cvp = cvpartition(n,Holdout=0.95);
idxtt = training(cvp);
idxil = test(cvp);

% 5% set for traditional training
Xtt = X(idxtt,:);
Ytt = Y(idxtt);

% 95% set for incremental learning
Xil = X(idxil,:);
Yil = Y(idxil);

Fit a kernel regression model to 5% of the data.

Mdl = fitrkernel(Xtt,Ytt);

Convert the traditionally trained kernel regression model to a model for incremental learning. Specify the standard SGD solver and an estimation period of 2e4 observations (the default is 1000 when a learning rate is required).

IncrementalMdl = incrementalLearner(Mdl,Solver="sgd",EstimationPeriod=2e4);

IncrementalMdl is an incrementalRegressionKernel model object configured for incremental learning.

Fit the incremental model to the rest of the data by using the fit function. At each iteration:

Simulate a data stream by processing 10 observations at a time.

Overwrite the previous incremental model with a new one fitted to the incoming observations.

Store the initial learning rate and number of training observations to see how they evolve during training.

% Preallocation
nil = numel(Yil);
numObsPerChunk = 10;
nchunk = floor(nil/numObsPerChunk);
learnrate = zeros(nchunk,1);
numtrainobs = zeros(nchunk,1);

% Incremental fitting
for j = 1:nchunk
    ibegin = min(nil,numObsPerChunk*(j-1) + 1);
    iend = min(nil,numObsPerChunk*j);
    idx = ibegin:iend;
    IncrementalMdl = fit(IncrementalMdl,Xil(idx,:),Yil(idx));
    learnrate(j) = IncrementalMdl.SolverOptions.LearnRate;
    numtrainobs(j) = IncrementalMdl.NumTrainingObservations;
end

IncrementalMdl is an incrementalRegressionKernel model object trained on all the data in the stream.

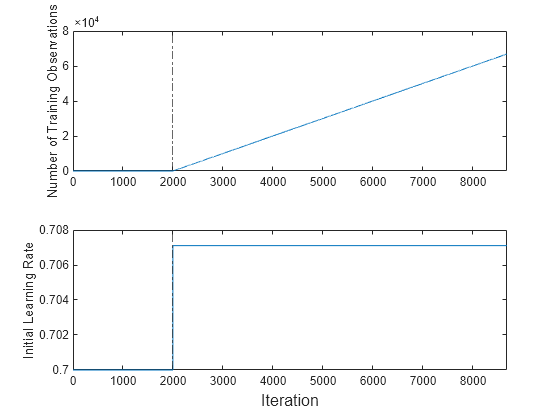

Create a trace plot of the number of training observations and the initial learning rate on separate tiles.

t = tiledlayout(2,1);
nexttile
plot(numtrainobs)
xlim([0 nchunk])
xline(IncrementalMdl.EstimationPeriod/numObsPerChunk,"-.");
ylabel("Number of Training Observations")
nexttile
plot(learnrate)
xlim([0 nchunk])
ylabel("Initial Learning Rate")
xline(IncrementalMdl.EstimationPeriod/numObsPerChunk,"-.");
xlabel(t,"Iteration")

The plot suggests that fit does not fit the model to the streaming data during the estimation period. The initial learning rate jumps from 0.7 to its autotuned value after the estimation period. During training, the software uses a learning rate that gradually decays from this initial value, as specified by the LearnRateSchedule property of IncrementalMdl.

Input Arguments

Mdl

Traditionally trained Gaussian kernel regression model, specified as a RegressionKernel model object returned by fitrkernel.

Note

Incremental learning functions support only numeric input predictor data. If Mdl was trained on categorical data, you must prepare an encoded version of the categorical data to use incremental learning functions. Use dummyvar to convert each categorical variable to a numeric matrix of dummy variables. Then, concatenate all dummy variable matrices and any other numeric predictors, in the same way that the training function encodes categorical data. For more details, see Dummy Variables.

Name-Value Arguments

Specify optional pairs of arguments as Name1=Value1,...,NameN=ValueN, where Name is the argument name and Value is the corresponding value. Name-value arguments must appear after other arguments, but the order of the pairs does not matter.

Example: Solver="sgd",MetricsWindowSize=100 specifies the stochastic gradient descent solver for objective optimization, and specifies processing 100 observations before updating the window performance metrics.

General Options

Solver

Objective function minimization technique, specified as a value in this table. The default is "scale-invariant".

| Value | Description |
|---|---|
| "scale-invariant" | Adaptive scale-invariant solver for incremental learning [1] |
| "sgd" | Stochastic gradient descent (SGD) [2][3] |
| "asgd" | Average stochastic gradient descent (ASGD) [4] |

Example: Solver="sgd"

Data Types: char | string

EstimationPeriod

Number of observations processed by the incremental model to estimate hyperparameters before training or tracking performance metrics, specified as a nonnegative integer.

Note

If Mdl is prepared for incremental learning (all hyperparameters required for training are specified), incrementalLearner forces EstimationPeriod to 0.

If Mdl is not prepared for incremental learning, incrementalLearner sets EstimationPeriod to 1000 by default.

For more details, see Estimation Period.

Example: EstimationPeriod=100

Data Types: single | double

SGD and ASGD Solver Options

BatchSize

Mini-batch size, specified as a positive integer. At each learning cycle during training, the incremental fitting functions use BatchSize observations to compute the subgradient.

The number of observations in the last mini-batch (last learning cycle in each function call of fit or updateMetricsAndFit) can be smaller than BatchSize. For example, if BatchSize is 10 and you supply 25 observations to fit or updateMetricsAndFit, the function uses 10 observations for the first two learning cycles and 5 observations for the last learning cycle.

Example: BatchSize=5

Data Types: single | double
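The mini-batch split in the BatchSize example can be checked with a few lines of plain MATLAB. This is a sketch of the arithmetic only, assuming BatchSize is 10 and that the last learning cycle takes whatever observations remain; the variable names are hypothetical, and this is not the toolbox's internal code.

```matlab
% Hypothetical illustration of learning-cycle sizes (plain arithmetic,
% not the toolbox implementation)
numObs = 25;        % observations supplied in one call to fit or updateMetricsAndFit
batchSize = 10;     % assumed BatchSize value

starts = 1:batchSize:numObs;                  % first observation of each learning cycle
stops = min(starts + batchSize - 1, numObs);  % last observation of each learning cycle
cycleSizes = stops - starts + 1               % observations used per learning cycle: [10 10 5]
```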

Lambda

Ridge (L2) regularization term strength, specified as a nonnegative scalar.

Example: Lambda=0.01

Data Types: single | double

LearnRate

Initial learning rate, specified as "auto" or a positive scalar.

The learning rate controls the optimization step size by scaling the objective subgradient. LearnRate specifies an initial value for the learning rate, and LearnRateSchedule determines the learning rate for subsequent learning cycles.

When you specify "auto":

The initial learning rate is 0.7.

If EstimationPeriod > 0, fit and updateMetricsAndFit change the rate to 1/sqrt(1+max(sum(X.^2,2))) at the end of EstimationPeriod.

Example: LearnRate=0.001

Data Types: single | double | char | string
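As a sketch of the "auto" rule, the following evaluates 1/sqrt(1+max(sum(X.^2,2))), the rate that fit and updateMetricsAndFit switch to at the end of the estimation period. The matrix X is a small hypothetical stand-in for the estimation-period predictor data; which representation of the data the toolbox feeds into this expression internally is an assumption not settled here.

```matlab
% Hypothetical estimation-period predictor data (3 observations, 2 predictors)
X = [1 2; 3 4; 0 1];

rowSqNorms = sum(X.^2,2);                  % squared norm of each observation: [5; 25; 1]
learnRate = 1/sqrt(1 + max(rowSqNorms))    % 1/sqrt(26), about 0.1961
```

The rate shrinks as the largest observation norm grows, so observations with large magnitudes produce smaller initial steps.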

LearnRateSchedule

Learning rate schedule, specified as a value in this table, where LearnRate specifies the initial learning rate γ0.

| Value | Description |
|---|---|
| "constant" | The learning rate is γ0 for all learning cycles. |
| "decaying" | The learning rate decays from γ0 as the learning cycle t increases. |

Example: LearnRateSchedule="constant"

Data Types: char | string

Adaptive Scale-Invariant Solver Options

Shuffle

Flag for shuffling the observations at each iteration, specified as logical 1 (true) or 0 (false).

| Value | Description |
|---|---|
| logical 1 (true) | The software shuffles the observations in an incoming chunk of data before the fit function fits the model. This action reduces bias induced by the sampling scheme. |
| logical 0 (false) | The software processes the data in the order received. |

Example: Shuffle=false

Data Types: logical

Performance Metrics Options

Metrics

Model performance metrics to track during incremental learning with updateMetrics or updateMetricsAndFit, specified as a built-in loss function name, string vector of names, function handle (@metricName), structure array of function handles, or cell vector of names, function handles, or structure arrays.

The following table lists the built-in loss function names and which learners, specified in Mdl.Learner, support them. You can specify more than one loss function by using a string vector.

| Name | Description | Learner Supporting Metric |
|---|---|---|
| "epsiloninsensitive" | Epsilon insensitive loss | 'svm' |
| "mse" | Weighted mean squared error | 'svm' and 'leastsquares' |

For more details on the built-in loss functions, see loss.

Example: Metrics=["epsiloninsensitive","mse"]

To specify a custom function that returns a performance metric, use function handle notation. The function must have this form:

metric = customMetric(Y,YFit)

The output argument metric is an n-by-1 numeric vector, where each element is the loss of the corresponding observation in the data processed by the incremental learning functions during a learning cycle.

You specify the function name (customMetric).

Y is a length n numeric vector of observed responses, where n is the sample size.

YFit is a length n numeric vector of corresponding predicted responses.

To specify multiple custom metrics and assign a custom name to each, use a structure array. To specify a combination of built-in and custom metrics, use a cell vector.

Example: Metrics=struct(Metric1=@customMetric1,Metric2=@customMetric2)

Example: Metrics={@customMetric1,@customMetric2,"mse",struct(Metric3=@customMetric3)}
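A minimal sketch of a custom metric with the required form, assuming a hypothetical absolute percentage error metric (apeMetric is an illustrative name, not a toolbox function). It returns one loss per observation as an n-by-1 vector, which the sketch checks on made-up data.

```matlab
% Hypothetical custom metric: absolute percentage error per observation.
% Y(:) and YFit(:) force column vectors so the output is n-by-1.
apeMetric = @(Y,YFit) abs((Y(:) - YFit(:))./Y(:));

% Check shape and values on made-up responses and predictions
Y = [2; 4; 5];
YFit = [1; 5; 5];
losses = apeMetric(Y,YFit)     % [0.5; 0.25; 0]
```

You could then pass it as Metrics=struct(AbsPercentError=apeMetric); following the row-name rules for structure arrays, it would be tracked under the row name AbsPercentError (also hypothetical). Because this metric divides by Y, it is undefined for zero responses.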

updateMetrics and updateMetricsAndFit store specified metrics in a table in the property IncrementalMdl.Metrics. The data type of Metrics determines the row names of the table.

| Metrics Value Data Type | Description of Metrics Property Row Name | Example |
|---|---|---|
| String or character vector | Name of corresponding built-in metric | Row name for "epsiloninsensitive" is "EpsilonInsensitiveLoss" |
| Structure array | Field name | Row name for struct(Metric1=@customMetric1) is "Metric1" |
| Function handle to function stored in a program file | Name of function | Row name for @customMetric is "customMetric" |
| Anonymous function | CustomMetric_j, where j is metric j in Metrics | Row name for @(Y,YFit)customMetric(Y,YFit)... is CustomMetric_1 |

By default:

Metrics is "epsiloninsensitive" if Mdl.Learner is 'svm'.

Metrics is "mse" if Mdl.Learner is 'leastsquares'.

For more details on performance metrics options, see Performance Metrics.

Data Types: char | string | struct | cell | function_handle

MetricsWarmupPeriod

Number of observations the incremental model must be fit to before it tracks performance metrics in its Metrics property, specified as a nonnegative integer. The incremental model is warm after incremental fitting functions fit (EstimationPeriod + MetricsWarmupPeriod) observations to the incremental model.

For more details on performance metrics options, see Performance Metrics.

Example: MetricsWarmupPeriod=50

Data Types: single | double
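The warm-up arithmetic can be sketched with the values from the robot arm example (MetricsWarmupPeriod=2000, 50 observations per chunk). The sketch assumes EstimationPeriod is 0 for that converted model and that warmness is checked at the start of each call; both are assumptions for illustration, and the variable names are hypothetical.

```matlab
% Values from the robot arm example; estimationPeriod = 0 is an assumption
estimationPeriod = 0;
metricsWarmupPeriod = 2000;
numObsPerChunk = 50;

% Observations the fitting functions must process before IsWarm becomes true
obsBeforeWarm = estimationPeriod + metricsWarmupPeriod;

% Assuming warmness is checked at the start of each call, the first chunk
% whose observations update Metrics follows the last warm-up chunk
firstTrackedChunk = ceil(obsBeforeWarm/numObsPerChunk) + 1   % 41
```

This agrees with the dashed line that the example draws at iteration MetricsWarmupPeriod/numObsPerChunk = 40.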

MetricsWindowSize

Number of observations to use to compute window performance metrics, specified as a positive integer.

For more details on performance metrics options, see Performance Metrics.

Example: MetricsWindowSize=250

Data Types: single | double

Output Arguments

IncrementalMdl

Gaussian kernel regression model for incremental learning, returned as an incrementalRegressionKernel model object. IncrementalMdl is also configured to generate predictions given new data (see predict).

The incrementalLearner function initializes IncrementalMdl for incremental learning using the model information in Mdl. The following table shows the Mdl properties that incrementalLearner passes to corresponding properties of IncrementalMdl. The function also passes other model information required to initialize IncrementalMdl, such as learned model coefficients and the random number stream.

| Property | Description |
|---|---|
| Epsilon | Half the width of the epsilon insensitive band, a nonnegative scalar. incrementalLearner passes this value only when Mdl.Learner is 'svm'. |
| KernelScale | Kernel scale parameter, a positive scalar |
| Learner | Linear regression model type, a character vector |
| Mu | Predictor variable means, a numeric vector |
| NumExpansionDimensions | Number of dimensions of expanded space, a positive integer |
| NumPredictors | Number of predictors, a positive integer |
| ResponseTransform | Response transformation function, a function name or function handle |
| Sigma | Predictor variable standard deviations, a numeric vector |

More About

Incremental learning, or online learning, is a branch of machine learning concerned with processing incoming data from a data stream, possibly given little to no knowledge of the distribution of the predictor variables, aspects of the prediction or objective function (including tuning parameter values), or whether the observations are labeled. Incremental learning differs from traditional machine learning, where enough labeled data is available to fit to a model, perform cross-validation to tune hyperparameters, and infer the predictor distribution.

Given incoming observations, an incremental learning model processes data in any of the following ways, but usually in this order:

Predict labels.

Measure the predictive performance.

Check for structural breaks or drift in the model.

Fit the model to the incoming observations.

For more details, see Incremental Learning Overview.
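The usual order (predict, measure, then fit) can be sketched as a loop over a hypothetical stream. To keep the sketch self-contained, the model is a plain linear model updated by one gradient step per chunk; it is a swapped-in stand-in for illustration, not incrementalRegressionKernel, and the step size and data are assumptions.

```matlab
% Toy sketch of predict, measure, fit on a hypothetical stream
rng(0)                                   % reproducible synthetic stream
X = randn(500,2);                        % hypothetical predictors
y = X*[2; -1] + 0.05*randn(500,1);       % hypothetical responses

beta = zeros(2,1);                       % untrained linear coefficients
learnRate = 0.3;                         % hypothetical constant step size
chunkSize = 10;
numChunks = numel(y)/chunkSize;
sqErr = zeros(numChunks,1);              % per-chunk MSE, measured before fitting

for j = 1:numChunks
    idx = (j-1)*chunkSize + (1:chunkSize);
    yfit = X(idx,:)*beta;                          % 1. predict
    sqErr(j) = mean((y(idx) - yfit).^2);           % 2. measure performance
    % 3. drift detection is omitted in this sketch
    grad = -X(idx,:)'*(y(idx) - yfit)/chunkSize;   % gradient of half the chunk MSE
    beta = beta - learnRate*grad;                  % 4. fit to the chunk
end
beta
```

Measuring each chunk before fitting to it mirrors what updateMetricsAndFit does: performance is always evaluated on observations the model has not yet been trained on.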

The adaptive scale-invariant solver for incremental learning, introduced in [1], is a gradient-descent-based objective solver for training linear predictive models. The solver is hyperparameter free, insensitive to differences in predictor variable scales, and does not require prior knowledge of the distribution of the predictor variables. These characteristics make it well suited to incremental learning.

The standard SGD and ASGD solvers are sensitive to differing scales among the predictor variables, resulting in models that can perform poorly. To achieve better accuracy using SGD and ASGD, you can standardize the predictor data, and tune the regularization and learning rate parameters. For traditional machine learning, enough data is available to enable hyperparameter tuning by cross-validation and predictor standardization. However, for incremental learning, enough data might not be available (for example, observations might be available only one at a time) and the distribution of the predictors might be unknown. These characteristics make parameter tuning and predictor standardization difficult or impossible to do during incremental learning.

The incremental fitting functions fit and updateMetricsAndFit use the more aggressive ScInOL2 version of the algorithm.

Random feature expansion, such as Random Kitchen Sinks [5] or Fastfood [6], is a scheme to approximate Gaussian kernels of the kernel regression algorithm for big data in a computationally efficient way. Random feature expansion is more practical for big data applications that have large training sets, but can also be applied to smaller data sets that fit in memory.

After mapping the predictor data into a high-dimensional space, the kernel regression algorithm searches for an optimal function that deviates from each response data point (yi) by values no greater than the epsilon margin (ε).
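Deviations inside the epsilon margin cost nothing, and larger deviations are penalized linearly beyond it. A minimal sketch, assuming the standard epsilon insensitive loss max(0, |y − f(x)| − ε) per observation; the data and margin are hypothetical, and how the built-in "epsiloninsensitive" metric weights and averages these values is not shown here.

```matlab
% Hypothetical responses, predictions, and margin
epsilon = 0.5;
y = [1.0; 2.0; 3.0];
yfit = [1.2; 2.9; 1.5];

absResid = abs(y - yfit);                % [0.2; 0.9; 1.5]
epsLoss = max(0, absResid - epsilon)     % approximately [0; 0.4; 1.0]
insideBand = absResid <= epsilon         % [true; false; false]
```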

Some regression problems cannot be described adequately using a linear model. In such cases, obtain a nonlinear regression model by replacing the dot product $x_1x_2'$ with a nonlinear kernel function $G(x_1,x_2) = \langle \varphi(x_1), \varphi(x_2) \rangle$, where $x_i$ is the ith observation (row vector) and $\varphi(x_i)$ is a transformation that maps $x_i$ to a high-dimensional space (called the “kernel trick”). However, evaluating $G(x_1,x_2)$, the Gram matrix, for each pair of observations is computationally expensive for a large data set (large n).

The random feature expansion scheme finds a random transformation so that its dot product approximates the Gaussian kernel. That is,

$$G(x_1,x_2) = \langle \varphi(x_1), \varphi(x_2) \rangle \approx T(x_1)T(x_2)',$$

where $T(x)$ maps $x$ in $\mathbb{R}^p$ to a high-dimensional space ($\mathbb{R}^m$). The Random Kitchen Sinks [5] scheme uses the random transformation

$$T(x) = m^{-1/2}\exp\left(iZx'\right)',$$

where $Z \in \mathbb{R}^{m \times p}$ is a sample drawn from $N(0, \sigma^{-2})$ and σ is a kernel scale. This scheme requires O(mp) computation and storage. The Fastfood [6] scheme introduces another random basis V instead of Z using Hadamard matrices combined with Gaussian scaling matrices. This random basis reduces computation cost to O(m log p) and reduces storage to O(m).

incrementalRegressionKernel uses the Fastfood scheme for random feature expansion, and uses linear regression to train a Gaussian kernel regression model. You can specify values for m and σ using the NumExpansionDimensions and KernelScale name-value arguments, respectively, when you create a traditionally trained model using fitrkernel or when you call incrementalRegressionKernel directly to create the model object.
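The Random Kitchen Sinks idea can be checked numerically in base MATLAB. This sketch uses the transformation T(x) = m^(-1/2)*exp(i*Z*x')' with the entries of Z drawn from N(0, σ^-2); with that choice, T(x1)*T(x2)' is a Monte Carlo estimate of exp(−‖x1 − x2‖²/(2σ²)). It is an illustration only: the toolbox uses Fastfood, and the exact σ scaling inside the toolbox's kernel definition is not assumed here. The points, m, and σ are hypothetical.

```matlab
% Random Kitchen Sinks sketch (base MATLAB, not the toolbox's Fastfood code)
rng(0)                                  % reproducible random basis
p = 3;                                  % number of predictors
m = 20000;                              % number of expansion dimensions
sigma = 1.5;                            % kernel scale
Z = randn(m,p)/sigma;                   % entries drawn from N(0, sigma^-2)

% T maps a 1-by-p row x to a 1-by-m complex row
T = @(x) (exp(1i*Z*x')/sqrt(m))';

x1 = [0.2 -0.5 1.0];
x2 = [0.7  0.1 0.4];
approxK = real(T(x1)*T(x2)');                  % random-feature estimate
exactK = exp(-norm(x1 - x2)^2/(2*sigma^2));    % kernel targeted by this Z distribution
```

Increasing m tightens the approximation at the cost of O(mp) work per observation, which is the cost that Fastfood reduces.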

Algorithms

During the estimation period, the incremental fitting functions fit and updateMetricsAndFit use the first incoming EstimationPeriod observations to estimate (tune) hyperparameters required for incremental training. Estimation occurs only when EstimationPeriod is positive. This table describes the hyperparameters and when they are estimated, or tuned.

| Hyperparameter | Model Property | Usage | Conditions |
|---|---|---|---|
| Predictor means and standard deviations | Mu and Sigma | Standardize predictor data | The hyperparameters are not estimated. |
| Learning rate | LearnRate field of SolverOptions | Adjust the solver step size | The hyperparameter is estimated when both of these conditions apply: Solver is "sgd" or "asgd", and LearnRate is "auto". |
| Half the width of the epsilon insensitive band | Epsilon | Control the number of support vectors | The software does not estimate Epsilon. If you create IncrementalMdl by converting Mdl, incrementalLearner passes Mdl.Epsilon when Mdl.Learner is 'svm'. |
| Kernel scale parameter | KernelScale | Set a kernel scale parameter value for random feature expansion | |

During the estimation period, fit does not fit the model, and updateMetricsAndFit does not fit the model or update the performance metrics. At the end of the estimation period, the functions update the properties that store the hyperparameters.

If incremental learning functions are configured to standardize predictor variables, they do so using the means and standard deviations stored in the Mu and Sigma properties, respectively, of the incremental learning model IncrementalMdl.

If you standardize the predictor data when you train the input model Mdl by using fitrkernel, the following conditions apply:

incrementalLearner passes the means in Mdl.Mu and standard deviations in Mdl.Sigma to the corresponding incremental learning model properties.

Incremental learning functions always standardize the predictor data.

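When incremental fitting functions estimate predictor means and standard deviations, the functions compute weighted means and weighted standard deviations using the estimation period observations. Specifically, the functions standardize predictor j ($x_j$) using

$$x_j^* = \frac{x_j - \mu_j^*}{\sigma_j^*},$$

where

$$\mu_j^* = \frac{1}{\sum_k w_k}\sum_k w_k x_{jk}, \qquad \left(\sigma_j^*\right)^2 = \frac{1}{\sum_k w_k}\sum_k w_k\left(x_{jk} - \mu_j^*\right)^2,$$

$x_j$ is predictor j, and $x_{jk}$ is observation k of predictor j in the estimation period.

$w_k$ is the weight of observation k.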
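A minimal sketch of weighted standardization in plain MATLAB, assuming the common weighted definitions: the mean is sum(w.*x)/sum(w), and the variance divides the weighted squared deviations by sum(w). The predictor values and weights are hypothetical, and this is not the toolbox's implementation.

```matlab
% Hypothetical estimation-period values of one predictor and their weights
xj = [1; 2; 4];
w = [1; 1; 2];

muj = sum(w.*xj)/sum(w);                        % weighted mean: 2.75
sigmaj = sqrt(sum(w.*(xj - muj).^2)/sum(w));    % weighted std: sqrt(1.6875)
xjStd = (xj - muj)/sigmaj                       % standardized predictor values
```

After standardization, the weighted mean of xjStd is zero, which the heavier weight on the value 4 pulls toward that observation.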

The updateMetrics and updateMetricsAndFit functions are incremental learning functions that track model performance metrics (Metrics) from new data only when the incremental model is warm (IsWarm property is true). An incremental model becomes warm after fit or updateMetricsAndFit fits the incremental model to MetricsWarmupPeriod observations, which is the metrics warm-up period.

If EstimationPeriod > 0, the fit and updateMetricsAndFit functions estimate hyperparameters before fitting the model to data. Therefore, the functions must process an additional EstimationPeriod observations before the model starts the metrics warm-up period.

The Metrics property of the incremental model stores two forms of each performance metric as variables (columns) of a table, Cumulative and Window, with individual metrics in rows. When the incremental model is warm, updateMetrics and updateMetricsAndFit update the metrics at the following frequencies:

Cumulative: The functions compute cumulative metrics since the start of model performance tracking. The functions update metrics every time you call the functions and base the calculation on the entire supplied data set.

Window: The functions compute metrics based on all observations within a window determined by MetricsWindowSize, which also determines the frequency at which the software updates Window metrics. For example, if MetricsWindowSize is 20, the functions compute metrics based on the last 20 observations in the supplied data (X((end - 20 + 1):end,:) and Y((end - 20 + 1):end)).

Incremental functions that track performance metrics within a window use the following process:

Store a buffer of length MetricsWindowSize for each specified metric, and store a buffer of observation weights.

Populate elements of the metrics buffer with the model performance based on batches of incoming observations, and store corresponding observation weights in the weights buffer.

When the buffer is full, overwrite the Window field of the Metrics property with the weighted average performance in the metrics window. If the buffer overfills when the function processes a batch of observations, the latest incoming MetricsWindowSize observations enter the buffer, and the earliest observations are removed from the buffer. For example, suppose MetricsWindowSize is 20, the metrics buffer has 10 values from a previously processed batch, and 15 values are incoming. To compose the length 20 window, the functions use the measurements from the 15 incoming observations and the latest 5 measurements from the previous batch.
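The overfill case (window of 20, 10 buffered values, 15 incoming) can be sketched in plain MATLAB. The per-observation losses are hypothetical integers chosen so the kept values are easy to see, all weights are assumed to be 1, and the sketch covers only this single overfill step, not the toolbox's full buffering logic.

```matlab
% Hypothetical per-observation losses; all weights assumed to be 1
windowSize = 20;                            % MetricsWindowSize
buffer = (1:10)';                           % 10 values from a previous batch
incoming = (101:115)';                      % 15 values from the incoming batch

buffer = [buffer; incoming];                % append the incoming measurements
if numel(buffer) > windowSize
    buffer = buffer(end-windowSize+1:end);  % drop the earliest measurements
end
weights = ones(size(buffer));
windowMetric = sum(weights.*buffer)/sum(weights)   % weighted average: 83
```

The buffer keeps the latest 5 previous values (6 through 10) and all 15 incoming values, matching the example in the text.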

The software omits an observation with a NaN prediction when computing the Cumulative and Window performance metric values.

References

[1] Kempka, Michał, Wojciech Kotłowski, and Manfred K. Warmuth. "Adaptive Scale-Invariant Online Algorithms for Learning Linear Models." Preprint, submitted February 10, 2019. https://arxiv.org/abs/1902.07528.

[2] Langford, J., L. Li, and T. Zhang. “Sparse Online Learning Via Truncated Gradient.” J. Mach. Learn. Res., Vol. 10, 2009, pp. 777–801.

[3] Shalev-Shwartz, S., Y. Singer, and N. Srebro. “Pegasos: Primal Estimated Sub-Gradient Solver for SVM.” Proceedings of the 24th International Conference on Machine Learning, ICML ’07, 2007, pp. 807–814.

[4] Xu, Wei. “Towards Optimal One Pass Large Scale Learning with Averaged Stochastic Gradient Descent.” CoRR, abs/1107.2490, 2011.

[5] Rahimi, A., and B. Recht. “Random Features for Large-Scale Kernel Machines.” Advances in Neural Information Processing Systems. Vol. 20, 2008, pp. 1177–1184.

[6] Le, Q., T. Sarlós, and A. Smola. “Fastfood — Approximating Kernel Expansions in Loglinear Time.” Proceedings of the 30th International Conference on Machine Learning. Vol. 28, No. 3, 2013, pp. 244–252.

[7] Huang, P. S., H. Avron, T. N. Sainath, V. Sindhwani, and B. Ramabhadran. “Kernel methods match Deep Neural Networks on TIMIT.” 2014 IEEE International Conference on Acoustics, Speech and Signal Processing. 2014, pp. 205–209.

Version History

Introduced in R2022a
