predict

Predict responses for new observations from kernel incremental learning model

Since R2022a

Description

Examples

Create an incremental learning model by converting a traditionally trained kernel model, and predict responses using both models.

Load the 2015 NYC housing data set. For more details on the data, see NYC Open Data.

load NYCHousing2015

Extract the response variable SALEPRICE from the table. For numerical stability, scale SALEPRICE by 1e6.

Y = NYCHousing2015.SALEPRICE/1e6;
NYCHousing2015.SALEPRICE = [];

To reduce computational cost for this example, remove the NEIGHBORHOOD column, which contains a categorical variable with 254 categories.

NYCHousing2015.NEIGHBORHOOD = [];

Create dummy variable matrices from the other categorical predictors.

catvars = ["BOROUGH","BUILDINGCLASSCATEGORY"];
dumvarstbl = varfun(@(x)dummyvar(categorical(x)),NYCHousing2015, ...
    InputVariables=catvars);
dumvarmat = table2array(dumvarstbl);
NYCHousing2015(:,catvars) = [];

Treat all other numeric variables in the table as predictors of sales price. Concatenate the matrix of dummy variables to the rest of the predictor data.

idxnum = varfun(@isnumeric,NYCHousing2015,OutputFormat="uniform");

X = [dumvarmat NYCHousing2015{:,idxnum}];

Fit a kernel regression model to the entire data set.

Mdl = fitrkernel(X,Y)

Mdl =

RegressionKernel

ResponseName: 'Y'

Learner: 'svm'

NumExpansionDimensions: 2048

KernelScale: 1

Lambda: 1.0935e-05

BoxConstraint: 1

Epsilon: 0.0549

Properties, Methods

Mdl is a RegressionKernel model object representing a traditionally trained kernel regression model.

Convert the traditionally trained kernel regression model to a model for incremental learning.

IncrementalMdl = incrementalLearner(Mdl)

IncrementalMdl =

incrementalRegressionKernel

IsWarm: 1

Metrics: [1×2 table]

ResponseTransform: 'none'

NumExpansionDimensions: 2048

KernelScale: 1

Properties, Methods

IncrementalMdl is an incrementalRegressionKernel model object prepared for incremental learning.

The incrementalLearner function initializes the incremental learner by passing model parameters to it, along with other information Mdl extracted from the training data. IncrementalMdl is warm (IsWarm is 1), which means that incremental learning functions can start tracking performance metrics.

An incremental learner created from converting a traditionally trained model can generate predictions without further processing.

Predict sales prices for all observations using both models.

ttyfit = predict(Mdl,X);
ilyfit = predict(IncrementalMdl,X);
compareyfit = norm(ttyfit - ilyfit)

compareyfit = 0

The difference between the fitted values generated by the models is 0.

To compute posterior class probabilities, specify a logistic regression incremental learner.

Load the human activity data set. Randomly shuffle the data.

load humanactivity
n = numel(actid);
rng(10) % For reproducibility
idx = randsample(n,n);
X = feat(idx,:);
Y = actid(idx);

For details on the data set, enter Description at the command line.

Responses can be one of five classes: Sitting, Standing, Walking, Running, or Dancing. Dichotomize the response by identifying whether the subject is moving (actid > 2).

Y = Y > 2;

Create an incremental logistic regression model for binary classification. Prepare it for predict by fitting the model to the first 10 observations.

Mdl = incrementalClassificationKernel(Learner="logistic");

initobs = 10;

Mdl = fit(Mdl,X(1:initobs,:),Y(1:initobs));

Mdl is an incrementalClassificationKernel model. All its properties are read-only.

Simulate a data stream, and perform the following actions on each incoming chunk of 50 observations:

- Call predict to predict classification scores for the observations in the incoming chunk of data. The classification scores are posterior class probabilities for logistic regression learners.

- Call rocmetrics to compute the area under the ROC curve (AUC) using the classification scores, and store the result.

- Call fit to fit the model to the incoming chunk. Overwrite the previous incremental model with a new one fitted to the incoming observations.

numObsPerChunk = 50;
nchunk = floor((n - initobs)/numObsPerChunk);
auc = zeros(nchunk,1);

% Incremental learning
for j = 1:nchunk
    ibegin = min(n,numObsPerChunk*(j-1) + 1 + initobs);
    iend = min(n,numObsPerChunk*j + initobs);
    idx = ibegin:iend;
    [~,posteriorProb] = predict(Mdl,X(idx,:));
    mdlROC = rocmetrics(Y(idx),posteriorProb,Mdl.ClassNames);
    auc(j) = mdlROC.AUC(2);
    Mdl = fit(Mdl,X(idx,:),Y(idx));
end

Mdl is an incrementalClassificationKernel model object trained on all the data in the stream.

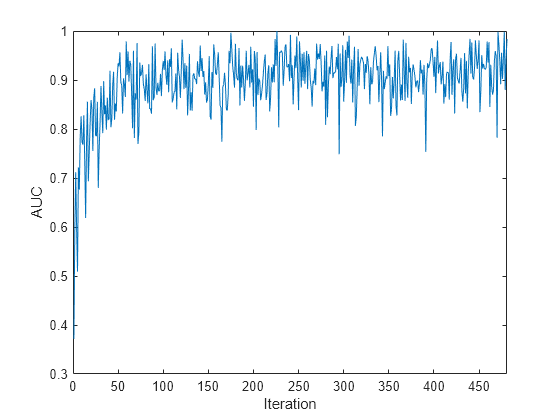

Plot the AUC for the incoming chunks of data.

plot(auc)
xlim([0 nchunk])
ylabel("AUC")
xlabel("Iteration")

The plot suggests that the classifier predicts moving subjects well during incremental learning.

Input Arguments

Incremental learning model, specified as an incrementalClassificationKernel or incrementalRegressionKernel model object. You can create Mdl directly or by converting a supported, traditionally trained machine learning model using the incrementalLearner function. For more details, see the corresponding reference page.

You must configure Mdl to predict labels for a batch of observations.

- If Mdl is a converted, traditionally trained model, you can predict labels without any modifications.

- Otherwise, you must fit Mdl to data using fit or updateMetricsAndFit.

Batch of predictor data, specified as a floating-point matrix of n observations and Mdl.NumPredictors predictor variables.

Note

predict supports only floating-point input predictor data. If your input data includes categorical data, you must prepare an encoded version of the categorical data. Use dummyvar to convert each categorical variable to a numeric matrix of dummy variables. Then, concatenate all dummy variable matrices and any other numeric predictors. For more details, see Dummy Variables.
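The encoding step described in the note can be sketched as follows. This is a minimal illustration, not part of the original page: the variables color and weight are hypothetical stand-ins for a categorical and a numeric predictor.

```matlab
% Hypothetical predictor data: one categorical and one numeric variable.
color = categorical(["red";"green";"blue";"green"]);
weight = [1.2; 3.4; 2.2; 0.7];

% dummyvar returns one indicator column per category of its input.
D = dummyvar(color);

% Concatenate the dummy variables with the numeric predictor to obtain
% a floating-point matrix suitable for predict.
X = [D weight];
```

Here X has one row per observation and one column for each category plus one for the numeric predictor.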

Data Types: single | double

Output Arguments

Predicted responses (labels), returned as a categorical or character array; floating-point, logical, or string vector; or cell array of character vectors with n rows. n is the number of observations in X, and label(j) is the predicted response for observation j.

- For regression problems, label is a floating-point vector.

- For classification problems, label has the same data type as the class names stored in Mdl.ClassNames. (The software treats string arrays as cell arrays of character vectors.)

The predict function classifies an observation into the class yielding the highest score. For an observation with NaN scores, the function classifies the observation into the majority class, which makes up the largest proportion of the training labels.
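The highest-score rule can be illustrated with a short sketch. The class names and score values below are hypothetical and are not produced by a fitted model; the sketch also does not cover the NaN case.

```matlab
% Hypothetical class names (in the order of Mdl.ClassNames) and scores
% for three observations; each row of score holds one observation.
classNames = ["idle";"moving"];
score = [ 0.8 -0.8
         -0.3  0.3
          1.5 -1.5];

% For each row, find the column with the highest score and map that
% column index to the corresponding class name.
[~,idx] = max(score,[],2);
label = classNames(idx);
```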

Classification scores, returned as an n-by-2 floating-point matrix when Mdl is an incrementalClassificationKernel model. n is the number of observations in X. score(j,k) is the score for classifying observation j into class k. Mdl.ClassNames specifies the order of the classes.

If Mdl.Learner is 'svm', predict returns raw classification scores. If Mdl.Learner is 'logistic', classification scores are posterior probabilities.

More About

For kernel incremental learning models for binary classification, the raw classification score for classifying the observation x, a row vector, into the positive class (second class in Mdl.ClassNames) is

$$f(x) = T(x)\beta + \beta_0,$$

where

- T(·) is a transformation of an observation for feature expansion.

- β0 is the scalar bias.

- β is the column vector of coefficients.

The raw classification score for classifying x into the negative class (first class in Mdl.ClassNames) is -f(x). The software classifies observations into the class that yields the positive score.

If the kernel classification model consists of logistic regression learners, then the software applies the "logit" score transformation to the raw classification scores.
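The following sketch works through the score computation numerically. It assumes the linear form f(x) = T(x)β + β0 implied by the definitions above, and it assumes that the "logit" transformation maps a raw score s to 1/(1 + exp(-s)). The values of Tx, beta, and beta0 are hypothetical; in a fitted model they come from the feature expansion and the estimated parameters.

```matlab
% Hypothetical expanded observation T(x) (row vector), coefficients
% (column vector), and scalar bias.
Tx = [0.2 -0.5 0.1];
beta = [1.0; 0.4; -2.0];
beta0 = 0.3;

% Raw score for the positive class (second class).
f = Tx*beta + beta0;

% Raw scores ordered as [negative class, positive class].
rawScore = [-f f];

% Assumed "logit" transformation, which maps raw scores to (0,1).
posterior = 1./(1 + exp(-rawScore));
```

With these values f is 0.1, so the observation is assigned to the positive class, and the two transformed scores sum to 1.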

Extended Capabilities

Usage notes and limitations:

- Use saveLearnerForCoder, loadLearnerForCoder, and codegen (MATLAB Coder) to generate code for the predict function. Save a trained model by using saveLearnerForCoder. Define an entry-point function that loads the saved model by using loadLearnerForCoder and calls the predict function. Then use codegen to generate code for the entry-point function.

- To generate single-precision C/C++ code for predict, specify DataType="single" when you call the loadLearnerForCoder function.

- This table contains notes about the arguments of predict. Arguments not included in this table are fully supported.

| Argument | Notes and Limitations |
| --- | --- |
| Mdl | For usage notes and limitations of the model object, see incrementalClassificationKernel or incrementalRegressionKernel. |
| X | Batch-to-batch, the number of observations can be a variable size. The number of predictor variables must equal Mdl.NumPredictors. X must be single or double. |

The following restrictions apply:

- If you configure Mdl to shuffle data (Mdl.Shuffle is true, or Mdl.Solver is "sgd" or "asgd"), the fit function randomly shuffles each incoming batch of observations before it fits the model to the batch. The order of the shuffled observations might not match the order generated by MATLAB®. Therefore, if you fit Mdl before generating predictions, the predictions computed in MATLAB might not be equal to the predictions computed by the generated code.

- Use a homogeneous data type (specifically, single or double) for all floating-point input arguments and object properties.

For more information, see Introduction to Code Generation for Statistics and Machine Learning Functions.
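As a rough sketch of the code generation workflow described in this section, an entry-point function might look like the following. The function name myPredict and the file name IncrementalMdlFile are hypothetical; the sketch assumes that a trained incremental model was previously saved with saveLearnerForCoder(IncrementalMdl,'IncrementalMdlFile').

```matlab
function yfit = myPredict(X) %#codegen
% Hypothetical entry-point function, saved as myPredict.m.
% Load the model saved earlier by saveLearnerForCoder under the
% assumed file name, then predict responses for the batch X.
Mdl = loadLearnerForCoder('IncrementalMdlFile');
yfit = predict(Mdl,X);
end
```

To generate code, you could then call codegen on myPredict with an -args specification that describes X, for example a variable-size type created with coder.typeof whose number of columns equals Mdl.NumPredictors.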

Version History

Introduced in R2022a

You can generate C/C++ code for the predict function.

