rlTRPOAgent

Description

Trust region policy optimization (TRPO) is an on-policy, policy gradient reinforcement learning method for environments with a discrete or continuous action space. It directly estimates a stochastic policy and uses a value function critic to estimate the value of the policy. By keeping the updated policy within a trust region close to the current policy, this algorithm helps prevent the significant performance drops that standard policy gradient methods can suffer. For continuous action spaces, this agent does not enforce constraints set in the action specification; therefore, if you need to enforce action constraints, you must do so within the environment.

Note

For TRPO agents, you can only use actors or critics with deep networks that support calculating higher-order derivatives. Actors and critics that use recurrent networks, custom basis functions, or tables are not supported. To implement a table using a neural network, see Use Custom Layer in TRPO Agent to Solve Tabular Approximation Problem.

For more information on TRPO agents, see Trust Region Policy Optimization (TRPO) Agent. For more information on the different types of reinforcement learning agents, see Reinforcement Learning Agents.

Creation

Syntax

Description

Create Agent from Observation and Action Specifications

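agent = rlTRPOAgent(observationInfo,actionInfo) creates a TRPO agent whose actor and critic use default deep neural networks built from the observation specification observationInfo and the action specification actionInfo. The ObservationInfo and ActionInfo properties of agent are set to the observationInfo and actionInfo input arguments, respectively.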
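As a minimal sketch of this syntax, the following creates a default TRPO agent and queries it for an action. The specifications (a four-element continuous observation and a two-element discrete action set) are hypothetical and chosen only for illustration. The sketch assumes that Reinforcement Learning Toolbox and Deep Learning Toolbox are installed.

```matlab
% Hypothetical specifications, chosen only for illustration.
obsInfo = rlNumericSpec([4 1]);      % continuous observation, 4-by-1 vector
actInfo = rlFiniteSetSpec([-1 1]);   % discrete action set {-1, 1}

% Create the agent with default actor and critic networks.
agent = rlTRPOAgent(obsInfo,actInfo);

% Query the agent for an action given a random observation.
% getAction expects and returns cell arrays.
action = getAction(agent,{rand(obsInfo.Dimension)});
```

Because the policy is stochastic, repeated calls to getAction with the same observation can return different elements of the action set.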

agent = rlTRPOAgent(observationInfo,actionInfo,initOpts) creates a TRPO agent with default networks configured using the options specified in the initOpts object. TRPO agents do not support recurrent neural networks. For more information on the initialization options, see rlAgentInitializationOptions.
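A short sketch of the initialization-options syntax follows. The specification sizes, the limits, and the value 128 for NumHiddenUnit are illustrative assumptions, not recommended settings.

```matlab
% Hypothetical specifications for a continuous-action problem.
obsInfo = rlNumericSpec([4 1]);
actInfo = rlNumericSpec([1 1],LowerLimit=-2,UpperLimit=2);

% Request 128 units in each hidden layer of the default networks.
% UseRNN is left at its default (false) because TRPO agents do not
% support recurrent networks.
initOpts = rlAgentInitializationOptions(NumHiddenUnit=128);

% Create the agent with default networks sized by initOpts.
agent = rlTRPOAgent(obsInfo,actInfo,initOpts);
```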

Create Agent from Actor and Critic

Specify Agent Options

agent = rlTRPOAgent(___,agentOptions) creates a TRPO agent and sets the AgentOptions property to the agentOptions input argument. Use this syntax after any of the input arguments in the previous syntaxes.
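The following sketch shows one way to pass agent options at creation. The option values are arbitrary examples picked for illustration, and the specifications are hypothetical.

```matlab
% Hypothetical specifications, chosen only for illustration.
obsInfo = rlNumericSpec([4 1]);
actInfo = rlFiniteSetSpec([-1 1]);

% Build an options object; the values below are arbitrary examples.
agentOpts = rlTRPOAgentOptions( ...
    DiscountFactor=0.95, ...
    KLDivergenceLimit=0.02);

% Options can also be adjusted with dot notation before creating the agent.
agentOpts.CriticOptimizerOptions.LearnRate = 1e-3;

% Append the options object after the specification arguments.
agent = rlTRPOAgent(obsInfo,actInfo,agentOpts);
```

KLDivergenceLimit bounds how far each update can move the policy, which is the trust region that gives the method its name. Only the critic has optimizer options here, since the actor update is governed by the trust region rather than by a learning rate.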

Input Arguments

Properties

Object Functions

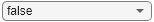

| Function | Description |
| --- | --- |
| train | Train reinforcement learning agents within a specified environment |
| sim | Simulate trained reinforcement learning agents within specified environment |
| getAction | Obtain action from agent, actor, or policy object given environment observations |
| getActor | Extract actor from reinforcement learning agent |
| setActor | Set actor of reinforcement learning agent |
| getCritic | Extract critic from reinforcement learning agent |
| setCritic | Set critic of reinforcement learning agent |
| generatePolicyFunction | Generate MATLAB function that evaluates policy of an agent or policy object |
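As a rough sketch of how several of these functions fit together, the following extracts the actor and critic from a default agent, evaluates them on a random observation, and sets them back. The specifications are hypothetical, and getValue is a function of the critic object rather than one of the agent functions listed above.

```matlab
% Hypothetical specifications, chosen only for illustration.
obsInfo = rlNumericSpec([4 1]);
actInfo = rlFiniteSetSpec([-1 1]);
agent = rlTRPOAgent(obsInfo,actInfo);

% Extract the function approximators from the agent.
actor = getActor(agent);
critic = getCritic(agent);

% Evaluate both on a random observation (passed as a cell array).
obs = {rand(obsInfo.Dimension)};
value = getValue(critic,obs);    % estimated value of the observation
action = getAction(actor,obs);   % action sampled from the stochastic policy

% After inspecting or modifying them, set them back into the agent.
agent = setActor(agent,actor);
agent = setCritic(agent,critic);
```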

Examples

Tips

For continuous action spaces, the default agent enforces the constraints set by the action specification for greedy actions only. The default actor network keeps the mean of the action distribution within the limits set by the action specification, so greedy actions always respect those limits. Exploratory (that is, non-greedy) actions are sampled around that mean and can fall outside the limits, because constraints on exploratory actions are not enforced.

For continuous action spaces and with custom networks, this agent does not automatically enforce the constraints set by the action specification. In this case, you must enforce action space constraints within the environment.
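One simple way to enforce limits inside the environment is to saturate the incoming action before using it. The sketch below assumes a hypothetical three-element action with limits of -2 and 2; in a real environment, the saturation would sit at the start of the step function.

```matlab
% Hypothetical action specification with symmetric limits.
actInfo = rlNumericSpec([3 1],LowerLimit=-2,UpperLimit=2);

% Example action that an agent might return, with two
% elements outside the specified limits.
rawAction = [-3.5; 0.4; 2.7];

% Saturate the action to the limits declared in the specification.
safeAction = min(max(rawAction,actInfo.LowerLimit),actInfo.UpperLimit);
```

Here safeAction is [-2; 0.4; 2]: out-of-range elements are clipped to the nearest limit, and in-range elements pass through unchanged.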

While tuning the learning rate of the actor network is necessary for PPO agents, it is not necessary for TRPO agents.

For high-dimensional observations, such as images, PPO, SAC, or TD3 agents are recommended instead of TRPO.

Version History

Introduced in R2021b

See Also

Apps

Functions

getAction | getActor | getCritic | getModel | generatePolicyFunction | generatePolicyBlock | getActionInfo | getObservationInfo

Objects

rlTRPOAgentOptions | rlAgentInitializationOptions | rlValueFunction | rlDiscreteCategoricalActor | rlContinuousGaussianActor | rlACAgent | rlPGAgent | rlPPOAgent