Train Default DDPG Agent to Swing Up and Balance Continuous Pendulum

This example shows how to train a deep deterministic policy gradient (DDPG) agent to swing up and balance a pendulum modeled in Simulink®.

For more information on DDPG agents, see Deep Deterministic Policy Gradient (DDPG) Agent. For an example that shows how to train a DDPG agent in a MATLAB® environment, see Compare DDPG Agent to LQR Controller.

Fix Random Number Stream for Reproducibility

The example code might involve computation of random numbers at several stages. Fixing the random number stream at the beginning of some sections in the example code preserves the random number sequence in the section every time you run it, which increases the likelihood of reproducing the results. For more information, see Results Reproducibility.

Fix the random number stream with seed 0 and random number algorithm Mersenne Twister. For more information on controlling the seed used for random number generation, see rng.

previousRngState = rng(0,"twister");

The output previousRngState is a structure that contains information about the previous state of the stream. You will restore the state at the end of the example.
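As a minimal sketch of what fixing the stream achieves, the following base-MATLAB snippet draws the same random numbers twice after resetting the seed, then restores the earlier generator state. The variable names savedState, a, and b are illustrative and are not part of the example code.

```matlab
% Save the current generator state and switch to seed 0, Mersenne Twister.
savedState = rng(0,"twister");

% Draw three random numbers.
a = rand(1,3);

% Reset to the same seed and draw again; the sequence repeats.
rng(0,"twister");
b = rand(1,3);

% Logical 1 (true) when both draws are identical.
sameSequence = isequal(a,b);

% Restore the generator state that was active before this snippet.
rng(savedState);
```

Restoring savedState at the end leaves the random stream as it was before the snippet ran, which is the same pattern the example follows with previousRngState.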

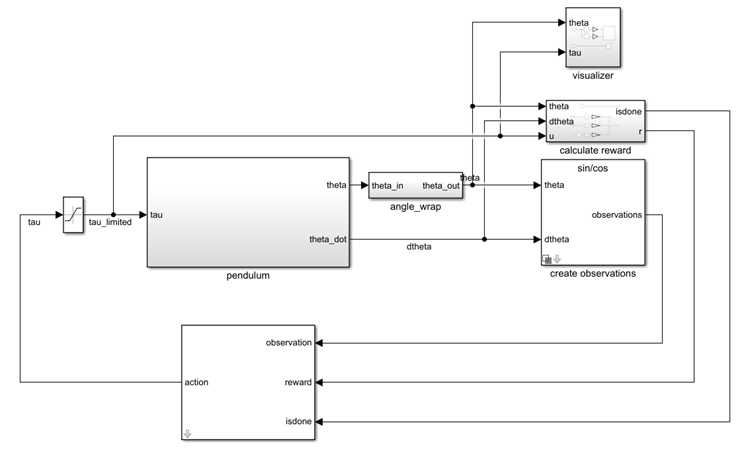

Pendulum Swing-Up Environment Model

The reinforcement learning environment for this example is a simple frictionless pendulum that initially hangs in a downward position. The training goal is to make the pendulum stand upright using minimal control effort.

Open the model.

mdl = "rlSimplePendulumModel";

open_system(mdl)

In this model:

The balanced, upright pendulum position is zero radians, and the downward hanging pendulum position is pi radians.

The torque action signal from the agent to the environment is from –2 to 2 N·m.

The observations from the environment are the sine of the pendulum angle, the cosine of the pendulum angle, and the pendulum angle derivative.

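The reward $r_t$, provided at every time step, is

$$r_t = -\left(\theta_t^2 + 0.1\,\dot{\theta}_t^2 + 0.001\,u_{t-1}^2\right)$$

Here:

$\theta_t$ is the angle of displacement from the upright position.

$\dot{\theta}_t$ is the derivative of the displacement angle.

$u_{t-1}$ is the control effort from the previous time step.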

For more information on this model, see Use Predefined Control System Environments.
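To make the observation and reward definitions concrete, the following base-MATLAB sketch evaluates them for a single state, the pendulum hanging downward at rest. The penalty weights 1, 0.1, and 0.001 are assumed here for the quadratic reward, and all variable names are illustrative; nothing in this snippet reads from the Simulink model.

```matlab
% Illustrative state: pendulum hanging downward, at rest, no previous torque.
theta = pi;       % angle of displacement from upright (rad)
thetaDot = 0;     % derivative of the displacement angle (rad/s)
uPrev = 0;        % control effort from the previous time step (N·m)

% Observation vector: sine and cosine of the angle, and the angle derivative.
obs = [sin(theta); cos(theta); thetaDot];

% The angle can be recovered from its sine and cosine components.
thetaRecovered = atan2(obs(1),obs(2));

% Quadratic penalty on angle, velocity, and effort.
% The weights 1, 0.1, and 0.001 are an assumption of this sketch.
reward = -(theta^2 + 0.1*thetaDot^2 + 0.001*uPrev^2);
```

For this state the reward is about –9.87 per time step, so an agent that never moves the pendulum accumulates a large negative return, while an agent that holds it upright with little torque earns a reward close to zero at each step.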

Create Environment Object

Create a predefined environment object for the pendulum.

env = rlPredefinedEnv("SimplePendulumModel-Continuous")

env =

SimulinkEnvWithAgent with properties:

Model : rlSimplePendulumModel

AgentBlock : rlSimplePendulumModel/RL Agent

ResetFcn : []

UseFastRestart : on

obsInfo = getObservationInfo(env);

The object has a continuous action space where the agent can apply torque values between –2 and 2 N·m to the pendulum.

actInfo = getActionInfo(env)

actInfo =

rlNumericSpec with properties:

LowerLimit: -2

UpperLimit: 2

Name: "torque"

Description: [0×0 string]

Dimension: [1 1]

DataType: "double"

Set the observations of the environment to be the sine of the pendulum angle, the cosine of the pendulum angle, and the pendulum angle derivative.

set_param( ...
    "rlSimplePendulumModel/create observations", ...
    "ThetaObservationHandling","sincos");

To define the initial condition of the pendulum as hanging downward, specify an environment reset function using an anonymous function handle. This reset function uses the setVariable (Simulink) function to set the model workspace variable theta0 to pi. For more information, see Reset Function for Simulink Environments.

env.ResetFcn = @(in)setVariable(in,"theta0",pi,"Workspace",mdl);

Specify the agent sample time Ts and the simulation time Tf in seconds.

Ts = 0.05; Tf = 20;
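These two values determine how many agent decisions fit in one episode. A quick check in base MATLAB, where stepsPerEpisode is an illustrative name:

```matlab
Ts = 0.05;   % agent sample time (s)
Tf = 20;     % simulation time (s)

% Number of agent steps in one episode of length Tf.
stepsPerEpisode = ceil(Tf/Ts);   % 400
```

Halving Ts would double the number of steps per episode, and with it the number of experiences collected per episode.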

Create Default DDPG Agent

When you create the agent, the actor and critic networks are initialized randomly. Ensure reproducibility of the section by fixing the seed used for random number generation.

rng(0,"twister")

Create a default rlDDPGAgent object using the environment specification objects.

agent = rlDDPGAgent(obsInfo,actInfo);

To ensure that the RL Agent block in the environment executes every Ts seconds instead of the default setting of one second, set the SampleTime property of the agent.

agent.AgentOptions.SampleTime = Ts;

Set a lower learning rate and a lower gradient threshold to promote a smoother (though possibly slower) training.

agent.AgentOptions.CriticOptimizerOptions.LearnRate = 1e-3;
agent.AgentOptions.ActorOptimizerOptions.LearnRate = 1e-3;
agent.AgentOptions.CriticOptimizerOptions.GradientThreshold = 1;
agent.AgentOptions.ActorOptimizerOptions.GradientThreshold = 1;

Increase the length of the experience buffer and the size of the mini-batch.

agent.AgentOptions.ExperienceBufferLength = 1e5;
agent.AgentOptions.MiniBatchSize = 128;

Train Agent

To train the agent, first specify the training options. For this example, use the following options.

Run training for a maximum of 5000 episodes, with each episode lasting a maximum of ceil(Tf/Ts) time steps.

Display the training progress in the Reinforcement Learning Training Monitor dialog box (set the Plots option) and disable the command line display (set the Verbose option to false).

Stop the training when the agent receives an average cumulative reward greater than –740 when evaluating the deterministic policy. At this point, the agent can quickly balance the pendulum in the upright position using minimal control effort.

Save a copy of the agent for each episode where the cumulative reward is greater than –740.

For more information on training options, see rlTrainingOptions.

maxepisodes = 5000;
maxsteps = ceil(Tf/Ts);
trainOpts = rlTrainingOptions( ...
    MaxEpisodes=maxepisodes, ...
    MaxStepsPerEpisode=maxsteps, ...
    ScoreAveragingWindowLength=5, ...
    Verbose=false, ...
    Plots="training-progress", ...
    StopTrainingCriteria="EvaluationStatistic", ...
    StopTrainingValue=-740, ...
    SaveAgentCriteria="EvaluationStatistic", ...
    SaveAgentValue=-740);

Train the agent using the train function. Training this agent is a computationally intensive process that takes several hours to complete. To save time while running this example, load a pretrained agent by setting doTraining to false. To train the agent yourself, set doTraining to true.

doTraining = false;
if doTraining
    % Use the rlEvaluator object to measure policy performance every 10
    % episodes.
    evaluator = rlEvaluator( ...
        NumEpisodes=1, ...
        EvaluationFrequency=10);
    % Train the agent.
    trainingResults = train(agent,env,trainOpts,Evaluator=evaluator);
else
    % Load the pretrained agent for the example.
    load("SimulinkPendulumDDPG.mat","agent")
end

Simulate DDPG Agent

Fix the random stream for reproducibility.

rng(0,"twister");

To validate the performance of the trained agent, simulate it within the pendulum environment. For more information on agent simulation, see rlSimulationOptions and sim.

simOptions = rlSimulationOptions(MaxSteps=500);
experience = sim(env,agent,simOptions);

totalReward = sum(experience.Reward)

totalReward = -731.2350

The simulation shows that the agent stabilizes the pendulum in the vertical position.
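To see how the reward accumulates over an episode rather than only its total, one option is to take a running sum of the per-step rewards. The sketch below uses a small hypothetical timeseries, rewardTs, as a stand-in for experience.Reward so that it runs in base MATLAB without the trained agent. The time and reward values are made up for illustration and are not results from this example.

```matlab
% Hypothetical stand-in for experience.Reward: five per-step rewards.
t = (0:0.05:0.2)';                    % time stamps (s)
r = [-9.87; -9.50; -8.00; -5.00; -2.00];
rewardTs = timeseries(r,t);

% Running total of the reward at each time step.
cumulativeReward = cumsum(rewardTs.Data);

% The last entry of the running total equals the episode total.
totalFromData = sum(rewardTs.Data);
```

A running total that flattens out indicates that the per-step penalty has become small, which is what you would expect once the pendulum is held near the upright position.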

Restore the random number stream using the information stored in previousRngState.

rng(previousRngState);

See Also

Functions

train | sim | rlSimulinkEnv

Objects

rlDDPGAgent | rlDDPGAgentOptions | rlQValueFunction | rlContinuousDeterministicActor | rlTrainingOptions | rlSimulationOptions | rlOptimizerOptions

Topics

- Train Default DQN Agent to Swing Up and Balance Discrete Pendulum

- Train Default DDPG Agent to Swing Up and Balance Continuous Cart-Pole

- Train DDPG Agent to Swing Up and Balance Pendulum with Bus Signal

- Train DDPG Agent with Custom Networks Using Image Observation

- Create Custom Simulink Environments

- Deep Deterministic Policy Gradient (DDPG) Agent

- Train Reinforcement Learning Agents