detect

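Syntax

bboxes = detect(detector,I)
detectionResults = detect(detector,ds)
[___] = detect(___,roi)
[___] = detect(___,Name=Value)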

Description

bboxes = detect(detector,I) detects objects within the input image I, using a you only look once version 4 (YOLO v4) object detector, detector. The detect function automatically resizes and rescales the input image to match the images used to train the detector. The locations of objects detected in the input image are returned as a set of bounding boxes.
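As a minimal sketch of this syntax, the following assumes that the Computer Vision Toolbox Model for YOLO v4 Object Detection add-on is installed, that the pretrained model name "csp-darknet53-coco" is available in your release, and that the sample image highway.png is on the MATLAB path. Substitute your own detector and image as needed.

```matlab
% Load a pretrained YOLO v4 detector (assumes the COCO add-on model is installed)
detector = yolov4ObjectDetector("csp-darknet53-coco");

% Read a test image (assumed to be on the MATLAB path)
I = imread("highway.png");

% Detect objects; each row of bboxes is one box, [x y width height] in pixels
bboxes = detect(detector,I);

% Draw the boxes only if something was detected
if ~isempty(bboxes)
    I = insertShape(I,"rectangle",bboxes);
end
imshow(I)
```

Because detect resizes the image internally, you can pass the image at its original size; the returned boxes refer to that original image.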

Note

To use the pretrained YOLO v4 object detection networks trained on the COCO dataset, you must install the Computer Vision Toolbox™ Model for YOLO v4 Object Detection. You can download and install it from the Add-On Explorer. For more information about installing add-ons, see Get and Manage Add-Ons. This function also requires Deep Learning Toolbox™.

detectionResults = detect(detector,ds) detects objects within all the images returned by the read function of the input datastore ds.
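A minimal sketch of the datastore syntax follows. The folder name myTestImages is hypothetical, and the sketch assumes the pretrained "csp-darknet53-coco" model is installed. It also assumes detectionResults comes back as a table with one row per image (columns Boxes, Scores, and Labels), as in recent releases; check the output description for your release.

```matlab
% Load a pretrained YOLO v4 detector (assumes the COCO add-on model is installed)
detector = yolov4ObjectDetector("csp-darknet53-coco");

% Hypothetical folder of test images; replace with your own path
imds = imageDatastore("myTestImages");

% Run the detector on every image that the datastore's read function returns
detectionResults = detect(detector,imds);

% Assumed: one table row per image in the datastore
disp(height(detectionResults))
```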

[___] = detect(___,

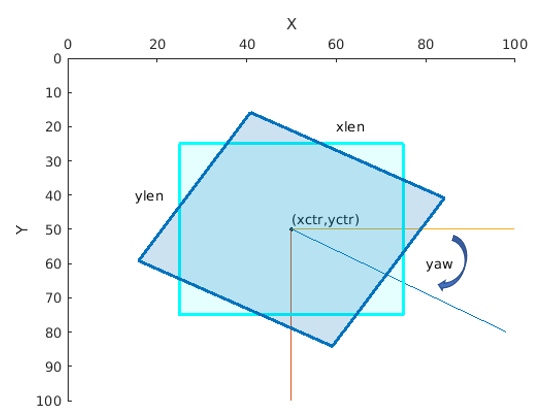

detects objects within the rectangular search region roi)roi, in addition

to any combination of arguments from previous syntaxes.
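The sketch below restricts detection to a search region. It assumes the pretrained "csp-darknet53-coco" model and the sample image highway.png are available, and it assumes roi is specified as [x y width height] in pixels. The particular region (the left half of the image) is illustrative only.

```matlab
% Load a pretrained YOLO v4 detector (assumes the COCO add-on model is installed)
detector = yolov4ObjectDetector("csp-darknet53-coco");
I = imread("highway.png");

% Illustrative search region: the left half of the image, as [x y width height]
roi = [1 1 floor(size(I,2)/2) size(I,1)];

% Only objects inside roi are considered
bboxes = detect(detector,I,roi);
```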

[___] = detect(___,Name=Value) specifies options using one or more name-value arguments, in addition to any combination of arguments from previous syntaxes.
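A minimal sketch of the name-value syntax, assuming the pretrained "csp-darknet53-coco" model and the sample image highway.png are available. The Threshold value of 0.6 is chosen for illustration. The sketch also assumes the additional scores and labels outputs supported in recent releases. The Name=Value form requires R2021a or later; in older code the equivalent is detect(detector,I,"Threshold",0.6).

```matlab
% Load a pretrained YOLO v4 detector (assumes the COCO add-on model is installed)
detector = yolov4ObjectDetector("csp-darknet53-coco");
I = imread("highway.png");

% Discard detections whose confidence score is below 0.6
[bboxes,scores,labels] = detect(detector,I,Threshold=0.6);

% One score and one label per detected box
disp(table(bboxes,scores,labels))
```

Raising Threshold trades recall for precision: fewer, more confident boxes are returned.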