importNetworkFromPyTorch

Description

Add-On Required: This feature requires the Deep Learning Toolbox Converter for PyTorch Models add-on.

net = importNetworkFromPyTorch(modelfile) imports a PyTorch model from the file modelfile. The PyTorch model must be an exported program or a traced model. Exported program models are recommended because they result in models with more Deep Learning Toolbox™ built-in layers.

Try exporting your model using PyTorch version 2.8 to prepare it for import. If the model cannot be exported, then trace it. See Tips for more information. The following code outlines the steps needed to prepare a PyTorch model for import:

import torch

# model is assumed to be an existing torch.nn.Module instance.

# Ensure the layers are set to inference mode.

model.eval()

# Move the model to the CPU.

model.to("cpu")

# Generate input data.

X = torch.rand(1,3,224,224)

# For ExportedProgram models

# Export the model and save it in PyTorch version 2.8.

exported_model = torch.export.export(model, (X,))

torch.export.save(exported_model, 'myModel.pt2')

# For traced models

# Trace the model and save it.

traced_model = torch.jit.trace(model.forward, X)

traced_model.save('myModel.pt')

Alternatively, import PyTorch models interactively using the Deep Network Designer app. On import, the app shows an import report with details about any issues that require attention. For more information, see Import Network from External Platform.
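The "export first, trace if the model cannot be exported" advice can be wrapped in a small helper. The following is a minimal sketch, not part of the toolbox: the names prepare_for_import, _export, and _trace are hypothetical, and the export_fn and trace_fn parameters are an assumption added so that the fallback logic can be exercised without a real model. The sketch catches any exception from the export step, which is broader than you may want in production code.

```python
def _export(model, example, path):
    # Imported lazily so the fallback logic can be tested without PyTorch installed.
    import torch
    torch.export.save(torch.export.export(model, (example,)), path)


def _trace(model, example, path):
    import torch
    torch.jit.trace(model, example).save(path)


def prepare_for_import(model, example, stem, export_fn=_export, trace_fn=_trace):
    """Save `model` as an exported program, falling back to a traced model.

    Returns the path of the file that was written (hypothetical helper).
    """
    model.eval()     # set the layers to inference mode
    model.to("cpu")  # move the model to the CPU
    try:
        path = stem + ".pt2"
        export_fn(model, example, path)
    except Exception:
        # Export can fail for many reasons; this sketch simply traces instead.
        path = stem + ".pt"
        trace_fn(model, example, path)
    return path
```

With PyTorch installed, a call such as prepare_for_import(model, torch.rand(1, 3, 224, 224), "myModel") writes either myModel.pt2 or myModel.pt, and the returned path is the file to pass to importNetworkFromPyTorch in MATLAB.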

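net = importNetworkFromPyTorch(modelfile,Name=Value) imports the PyTorch model with additional options specified by one or more name-value arguments. For example, specifying PyTorchInputSizes=[1 3 224 224] may return more built-in Deep Learning Toolbox layers.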

Examples

Input Arguments

Name-Value Arguments

Output Arguments

Limitations

The importNetworkFromPyTorch function supports exported networks created in PyTorch version 2.8. The function may be able to support traced networks created in other versions of PyTorch.

Import of ExportedProgram format models exported with dynamic shapes is not supported.
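Because exported networks are supported for PyTorch version 2.8, it can help to check the installed version on the Python side before exporting. The following is a minimal sketch; the helper name is_supported_export_version is hypothetical, and the function only parses a version string such as the value of torch.__version__.

```python
def is_supported_export_version(version, supported=(2, 8)):
    """Return True if a PyTorch version string matches the supported major.minor."""
    # Drop a local build suffix such as "+cu121" before splitting.
    release = version.split("+", 1)[0]
    parts = release.split(".")
    try:
        major, minor = int(parts[0]), int(parts[1])
    except (IndexError, ValueError):
        # Unrecognized format: treat as unsupported rather than guess.
        return False
    return (major, minor) == supported
```

For example, is_supported_export_version(torch.__version__) could gate the choice between exporting and tracing, since traced networks from other PyTorch versions may still be importable.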

More About

Tips

Exporting with torch.export.export() is recommended over tracing when you prepare PyTorch models for import. The ExportedProgram format provides a stable, framework-independent file that captures the model’s computation graph, input and output specifications, and parameters in a deterministic structure. This format allows the software to import models that are initialized and that comprise more built-in MATLAB layers.

Specify PyTorchInputSizes when importing traced models to get better support for importing PyTorch layers into built-in MATLAB layers.

To use a pretrained network for prediction or transfer learning on new images, you must preprocess your images in the same way as the images that were used to train the imported model. The most common preprocessing steps are resizing images, subtracting image average values, and converting the images from BGR format to RGB format.

For more information about preprocessing images for training and prediction, see Preprocess Images for Deep Learning.
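Two of the preprocessing steps named above, BGR-to-RGB conversion and subtraction of average values, can be sketched in plain Python as follows. This is an illustration only: the function name bgr_to_rgb_and_center is hypothetical, the image is assumed to be a nested list of shape height x width x 3 in BGR order, and the mean values must come from the data used to train your model (none are given here). Resizing is omitted.

```python
def bgr_to_rgb_and_center(image, rgb_mean):
    """Convert an H x W x 3 BGR image to RGB and subtract per-channel means.

    `image` is a nested list of pixels in (B, G, R) order.
    `rgb_mean` holds the training-set channel means in (R, G, B) order.
    """
    out = []
    for row in image:
        new_row = []
        for b, g, r in row:
            rgb = (r, g, b)  # reorder channels from BGR to RGB
            new_row.append([float(c) - m for c, m in zip(rgb, rgb_mean)])
        out.append(new_row)
    return out
```

In practice you would apply the same steps with an array library or in MATLAB, but the order of operations is the same: reorder the channels first, then subtract the means given in the reordered channel order.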

The members of the +Namespace namespace are not accessible if the namespace parent folder is not on the MATLAB path. For more information, see Namespaces and the MATLAB Path.

MATLAB uses one-based indexing, whereas Python uses zero-based indexing. In other words, the first element in an array has an index of 1 in MATLAB and an index of 0 in Python. For more information about MATLAB indexing, see Array Indexing. In MATLAB, to use an array of indices (ind) created in Python, convert the array to ind+1.

For more tips, see Tips on Importing Models from TensorFlow, PyTorch, and ONNX.
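The index shift between Python and MATLAB can also be applied on the Python side before the indices are handed over. A minimal sketch, with the hypothetical helper name to_matlab_indices:

```python
def to_matlab_indices(ind):
    """Shift zero-based Python indices to one-based MATLAB indices."""
    return [i + 1 for i in ind]


python_indices = [0, 2, 5]
print(to_matlab_indices(python_indices))  # [1, 3, 6]
```

This is equivalent to computing ind+1 in MATLAB; apply the shift in only one of the two places.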

Algorithms

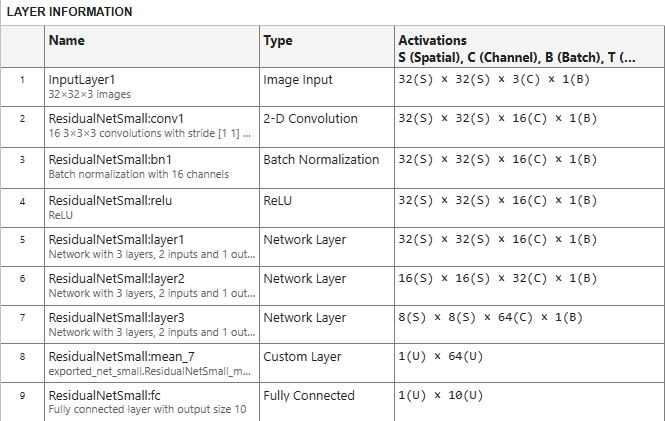

The importNetworkFromPyTorch function imports a PyTorch layer into MATLAB by trying these steps in order:

1. The function tries to import the PyTorch layer as a built-in MATLAB layer. For more information, see Conversion of PyTorch Layers.

2. The function tries to import the PyTorch layer as a built-in MATLAB function. For more information, see Conversion of PyTorch Layers.

3. The function tries to import the PyTorch layer as a custom layer. importNetworkFromPyTorch saves the generated custom layers and the associated functions in the +Namespace namespace.

4. The function imports the PyTorch layer as a custom layer with a placeholder function. You must complete the placeholder function before you can use the network. For more information, see Placeholder Functions.

In the first three cases, the imported network is ready for prediction after you initialize it.
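The ordered fallback described above can be pictured with a short sketch. It does not reproduce how importNetworkFromPyTorch decides internally: the function names and the three lookup sets are hypothetical stand-ins for whatever conversion support the software has for a given layer type.

```python
PLACEHOLDER = "custom layer with placeholder function"


def classify_import(layer_type, builtin_layers, builtin_functions, custom_layers):
    """Return which of the four cases applies, checking them in order."""
    if layer_type in builtin_layers:
        return "built-in layer"
    if layer_type in builtin_functions:
        return "built-in function"
    if layer_type in custom_layers:
        return "custom layer"
    # Nothing else applied, so the layer needs a user-completed placeholder.
    return PLACEHOLDER


def ready_after_initialization(outcomes):
    """True when every layer fell into one of the first three cases."""
    return all(outcome != PLACEHOLDER for outcome in outcomes)
```

For instance, with the made-up sets {"LayerA"}, {"LayerB"}, and {"LayerC"}, a model that contains "LayerA" and "LayerD" yields one built-in layer and one placeholder, so ready_after_initialization returns False until the placeholder function is completed.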

Alternative Functionality

App

You can also import networks from external platforms by using the Deep Network Designer app. The app uses the importNetworkFromPyTorch function to import the network, and displays a progress dialog box. During the import process, the app adds an input layer to the network, if possible, and displays an import report with details about any issues that require attention. After importing a network, you can interactively edit, visualize, and analyze the network. When you are finished editing the network, you can export it to Simulink® or generate MATLAB code for building networks.

Block

You can also work with PyTorch networks by using the PyTorch Model Predict block. This block additionally allows you to load Python functions to preprocess and postprocess data, and to configure input and output ports interactively.