getModel

Description

Examples

Modify Deep Neural Networks in Reinforcement Learning Agent

Create an environment with a continuous action space and obtain its observation and action specifications. For this example, load the environment used in the example Compare DDPG Agent to LQR Controller.

Load the predefined environment.

env = rlPredefinedEnv("DoubleIntegrator-Continuous");

Obtain observation and action specifications.

obsInfo = getObservationInfo(env); actInfo = getActionInfo(env);

Create a PPO agent from the environment observation and action specifications. This agent uses default deep neural networks for its actor and critic.

agent = rlPPOAgent(obsInfo,actInfo);

To modify the deep neural networks within a reinforcement learning agent, you must first extract the actor and critic function approximators.

actor = getActor(agent); critic = getCritic(agent);

Extract the deep neural networks from both the actor and critic function approximators.

actorNet = getModel(actor); criticNet = getModel(critic);
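Before editing the extracted networks, it can help to inspect them at the command line. The following is a minimal sketch that repeats the setup above so it runs on its own. It assumes a release in which getModel returns a dlnetwork object for the default agent networks, and it uses only standard dlnetwork properties.

```matlab
% Recreate the default PPO agent from the predefined environment.
env = rlPredefinedEnv("DoubleIntegrator-Continuous");
obsInfo = getObservationInfo(env);
actInfo = getActionInfo(env);
agent = rlPPOAgent(obsInfo,actInfo);

% Extract the networks from the actor and critic approximators.
actorNet = getModel(getActor(agent));
criticNet = getModel(getCritic(agent));

% Inspect the network type, its input and output names, and its layers.
disp(class(actorNet))
disp(actorNet.InputNames)
disp(actorNet.OutputNames)
disp(criticNet.Layers)
```

Noting the input and output names at this point makes it easier to confirm later that a modified network still exposes the same inputs and outputs.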

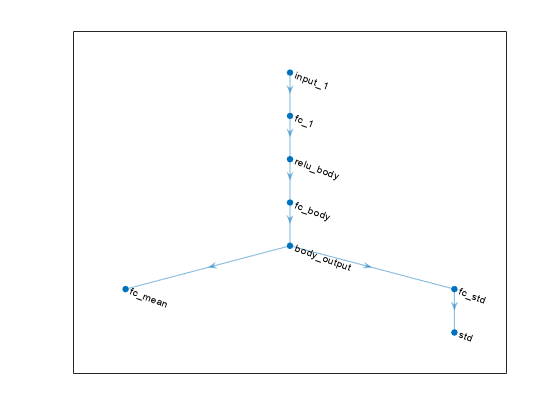

Plot the actor network.

plot(actorNet)

To validate a network, use analyzeNetwork. For example, validate the critic network.

analyzeNetwork(criticNet)

You can modify the actor and critic networks and save them back to the agent. To modify the networks, you can use the Deep Network Designer app. To open the app for each network, use the following commands.

deepNetworkDesigner(criticNet)
deepNetworkDesigner(actorNet)

In Deep Network Designer, modify the networks. For example, you can add additional layers to your network. When you modify the networks, do not change the input and output layers of the networks returned by getModel. For more information on building networks, see Build Networks with Deep Network Designer.

To validate the modified network in Deep Network Designer, click Analyze in the Analysis section. To export the modified network structures to the MATLAB® workspace, generate code for creating the new networks and run this code from the command line. Do not use the exporting option in Deep Network Designer. For an example that shows how to generate and run code, see Create DQN Agent Using Deep Network Designer and Train Using Image Observations.

For this example, the code for creating the modified actor and critic networks is in the createModifiedNetworks helper script.

createModifiedNetworks

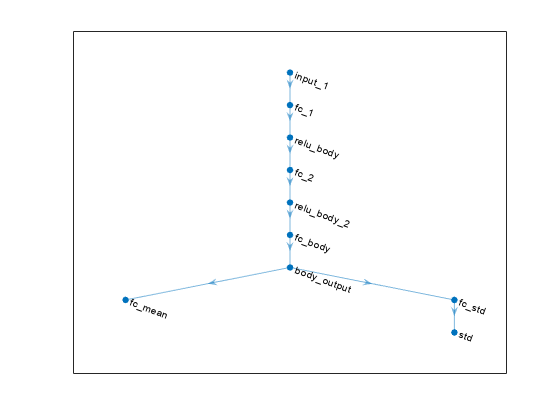

Each of the modified networks includes an additional fullyConnectedLayer and reluLayer in its main common path. Plot the modified actor network.

plot(modifiedActorNet)

After exporting the networks, insert the networks into the actor and critic function approximators.

actor = setModel(actor,modifiedActorNet); critic = setModel(critic,modifiedCriticNet);

Finally, insert the modified actor and critic function approximators into the agent.

agent = setActor(agent,actor); agent = setCritic(agent,critic);
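After writing an approximator back to the agent, a quick round-trip check can confirm that the agent holds the expected network and still produces an action. The sketch below is self-contained, so it reuses the unmodified network as a stand-in for modifiedActorNet (the createModifiedNetworks helper script is not assumed to be available). The random observation is purely illustrative.

```matlab
% Recreate the default PPO agent from the predefined environment.
env = rlPredefinedEnv("DoubleIntegrator-Continuous");
obsInfo = getObservationInfo(env);
actInfo = getActionInfo(env);
agent = rlPPOAgent(obsInfo,actInfo);

% Extract the actor network, then write it back.
% The unchanged network stands in here for a modified one.
actor = getActor(agent);
net = getModel(actor);
actor = setModel(actor,net);
agent = setActor(agent,actor);

% Read the network back from the agent and compare layer counts.
netInAgent = getModel(getActor(agent));
fprintf("Layers written: %d, layers in agent: %d\n", ...
    numel(net.Layers),numel(netInAgent.Layers));

% Confirm the agent still returns an action for a random observation.
act = getAction(agent,{rand(obsInfo.Dimension)});
disp(size(act{1}))
```

If the modified network changed an input or output layer, the setModel or getAction step is where a mismatch would typically surface.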

Input Arguments

fcnAppx — Function approximator

function approximator object

Function approximator, specified as one of the following:

- rlValueFunction object — Value function critic
- rlQValueFunction object — Q-value function critic
- rlVectorQValueFunction object — Multi-output Q-value function critic with a discrete action space
- rlContinuousDeterministicActor object — Deterministic policy actor with a continuous action space
- rlDiscreteCategoricalActor object — Stochastic policy actor with a discrete action space
- rlContinuousGaussianActor object — Stochastic policy actor with a continuous action space
- rlContinuousDeterministicTransitionFunction object — Continuous deterministic transition function for a model-based agent
- rlContinuousGaussianTransitionFunction object — Continuous Gaussian transition function for a model-based agent
- rlContinuousDeterministicRewardFunction object — Continuous deterministic reward function for a model-based agent
- rlContinuousGaussianRewardFunction object — Continuous Gaussian reward function for a model-based agent
- rlIsDoneFunction object — Is-done function for a model-based agent

To create an actor or critic function object, either construct the object directly or extract an existing one from an agent using getActor or getCritic.

Note

For agents with more than one critic, such as TD3 and SAC agents, you must call getModel for each critic representation individually. You cannot call getModel for the array returned by getCritic.

critics = getCritic(myTD3Agent); criticNet1 = getModel(critics(1)); criticNet2 = getModel(critics(2));
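When the number of critics is not known in advance, the same per-critic call can be written as a loop. This is a minimal sketch that assumes a default TD3 agent created from the predefined double-integrator environment; the variable criticNets is a name introduced here for illustration.

```matlab
% Create a default TD3 agent, which uses two critics.
env = rlPredefinedEnv("DoubleIntegrator-Continuous");
obsInfo = getObservationInfo(env);
actInfo = getActionInfo(env);
myTD3Agent = rlTD3Agent(obsInfo,actInfo);

% getCritic returns an array of critics for this agent.
critics = getCritic(myTD3Agent);

% Call getModel on each element, never on the whole array.
criticNets = cell(1,numel(critics));
for k = 1:numel(critics)
    criticNets{k} = getModel(critics(k));
end
```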

Output Arguments

model — Function approximation model

dlnetwork object | rlTable object | 1-by-2 cell array
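The returned type depends on how the approximator was built. As a hedged illustration of the rlTable case, the sketch below builds a table-based value function critic over a small finite observation set and then extracts its model. The observation values are arbitrary and chosen only for this example.

```matlab
% Define a finite observation space with four possible observations.
obsInfo = rlFiniteSetSpec([1 2 3 4]);

% Create a value table and a table-based value function critic.
vTable = rlTable(obsInfo);
critic = rlValueFunction(vTable,obsInfo);

% Extract the underlying model, expected here to be the table.
model = getModel(critic);
disp(class(model))
```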

Version History

Introduced in R2020b

R2022a: getModel now uses approximator objects instead of representation objects

Using representation objects to create actors and critics for reinforcement learning agents is no longer recommended. Therefore, getModel now uses function approximator objects instead.

R2021b: getModel returns a dlnetwork object

Starting from R2021b, built-in agents use dlnetwork objects as actor and critic representations, so getModel returns a dlnetwork object.

Due to numerical differences in the network calculations, previously trained agents might behave differently. If this happens, you can retrain your agents.

To use Deep Learning Toolbox™ functions that do not support dlnetwork, you must convert the network to layerGraph. For example, to use deepNetworkDesigner, replace deepNetworkDesigner(network) with deepNetworkDesigner(layerGraph(network)).

See Also

Functions

setModel|getActor|setActor|getCritic|setCritic|getNormalizer|setNormalizer|getLearnableParameters|setLearnableParameters

Objects

dlnetwork|rlValueFunction|rlQValueFunction|rlVectorQValueFunction|rlContinuousDeterministicActor|rlDiscreteCategoricalActor|rlContinuousGaussianActor|rlContinuousDeterministicTransitionFunction|rlContinuousGaussianTransitionFunction|rlContinuousDeterministicRewardFunction|rlContinuousGaussianRewardFunction|rlIsDoneFunction
