Get Started with Sensor Fusion and Tracking Toolbox

Sensor Fusion and Tracking Toolbox™ includes tools for designing, simulating, validating, and deploying systems that fuse data from multiple sensors to maintain situational awareness and localization. Reference examples provide a starting point for multi-object tracking and sensor fusion development for surveillance and autonomous systems, including airborne, spaceborne, ground-based, shipborne, and underwater systems.

You can fuse data from real-world sensors such as active and passive radar, sonar, lidar, EO/IR, IMU, and GPS. To further test your tracking algorithms, you can use the simulation environment and sensor models. The toolbox also includes multi-object trackers and estimation filters for evaluating and validating various fusion architectures using track performance metrics such as OSPA and GOSPA.
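To show what the OSPA and GOSPA metrics measure, here is a minimal, language-neutral sketch in Python. The toolbox itself is MATLAB-based, and this is not its trackOSPAMetric or trackGOSPAMetric implementation. The sketch assumes the commonly published definitions: a cutoff distance c, an order p, Euclidean point distance and, for GOSPA, α = 2. The function names `ospa` and `gospa`, the helper `_best_assignment_cost`, and the defaults c = 10 and p = 2 are hypothetical choices for illustration. Assignment is done by brute force, so this only suits a handful of objects.

```python
import itertools
import math


def _best_assignment_cost(X, Y, c, p):
    """Minimum over injective assignments X -> Y of sum(min(c, d)**p).

    Assumes len(X) <= len(Y). Brute force over permutations, so it is only
    practical for very small sets; real trackers use an assignment solver.
    """
    best = math.inf
    # permutations(range(n), 0) yields one empty tuple, so an empty X costs 0
    for perm in itertools.permutations(range(len(Y)), len(X)):
        cost = sum(min(c, math.dist(x, Y[j])) ** p for x, j in zip(X, perm))
        best = min(best, cost)
    return best


def ospa(X, Y, c=10.0, p=2):
    """OSPA distance between two finite sets of points (e.g. truth vs. tracks)."""
    m, n = len(X), len(Y)
    if m > n:  # make X the smaller set; the metric is symmetric
        X, Y, m, n = Y, X, n, m
    if n == 0:
        return 0.0  # both sets are empty
    cost = _best_assignment_cost(X, Y, c, p)
    # Each unassigned point in the larger set costs c**p; normalize by n
    return ((cost + c ** p * (n - m)) / n) ** (1.0 / p)


def gospa(X, Y, c=10.0, p=2):
    """GOSPA distance with alpha = 2 (unnormalized)."""
    m, n = len(X), len(Y)
    if m > n:
        X, Y, m, n = Y, X, n, m
    cost = _best_assignment_cost(X, Y, c, p)
    # Each missed target or false track costs c**p / 2; no normalization
    return (cost + (c ** p / 2) * (n - m)) ** (1.0 / p)
```

For example, with truth at (0, 0) and (10, 0) and tracks that match both plus one false track at (50, 50), `ospa` returns about 5.77 because it averages over the larger set. `gospa` returns about 7.07 because it adds c²/2 for the false track without normalizing. This difference is one reason GOSPA is often preferred when the absolute number of missed and false tracks matters.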

For simulation acceleration, rapid prototyping, or deployment, the toolbox supports C/C++ code generation.

Tutorials

- Orientation, Position, and Coordinate Convention

Learn about toolbox conventions for spatial representation and coordinate systems.

- Tracking Simulation Overview

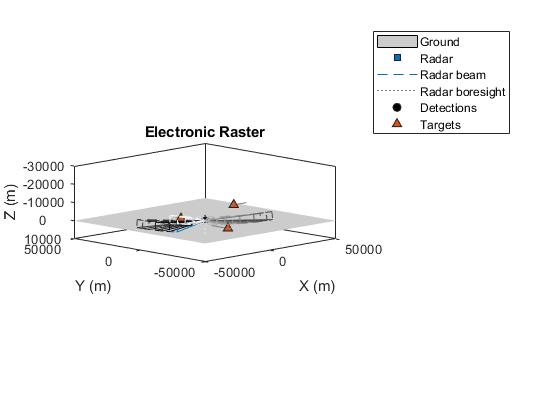

You can build a complete tracking simulation using the functions and objects supplied in this toolbox.

- Simulate Radar Detections

Simulate target detections by radar sensors.

- Model IMU, GPS, and INS/GPS

Model combinations of inertial sensors and GPS.

- Introduction to Estimation Filters

General review of estimation filters provided in the toolbox.

- Introduction to Multiple Target Tracking

Introduction to assignment-based multiple target trackers.

- Introduction to Tracking Metrics

While designing a multi-object tracking system, it is essential to devise a method to evaluate its performance against the available ground truth.

- Use theaterPlot to Visualize Tracking Scenario

This example shows how to use the theaterPlot object to visualize various aspects of a tracking scenario.

Toolbox Conventions

Tracking Scenario and Sensors

Inertial Sensor Fusion

Estimation Filters

Multi-Object Tracking

Metrics and Visualization

Related Information

Featured Examples

Videos

Part 1: What is Sensor Fusion?

An overview of what sensor fusion is and how it helps in the design of autonomous systems.

Part 2: Fusing Mag, Accel, and Gyro to Estimate Orientation

Use magnetometer, accelerometer, and gyro to estimate an object’s orientation.

Part 3: Fusing GPS and IMU to Estimate Pose

Use GPS and an IMU to estimate an object’s orientation and position.

Part 4: Tracking a Single Object With an IMM Filter

Track a single object by estimating state with an interacting multiple model filter.

Part 5: How to Track Multiple Objects at Once?

Introduce two common problems in multi-object tracking: data association and track maintenance.

Part 6: What is Track-Level Fusion?

Introduce track-to-track fusion and tracking architecture.