Developing a Teleoperated Surgical Robot for Minimally Invasive Single-Port Procedures

By Christian Hatzfeld and Johannes Bilz, Technische Universität Darmstadt

Compared with traditional open surgery, minimally invasive surgery (MIS) conducted via small incisions (or ports) results in less tissue trauma and thus faster recovery, less pain, and less scar tissue. Single-port operations reduce trauma even more. In single-port operations, a surgeon inserts a thin-walled tube into one small incision and performs the procedure using laparoscopic instruments located inside the tube. These operations can also be conducted via natural orifices, such as the navel, throat, or anus, eliminating the need for any incision at all.

Conventional single-port approaches are not without drawbacks. For example, they force the surgeon to operate within a tightly confined workspace using rigid instruments that limit dexterity. These limitations lead to frequent repositioning of the instruments and to instrument collisions.

To address these challenges, our research group at Technische Universität Darmstadt developed FLEXMIN, a teleoperated surgical robot for performing single-port surgeries via natural orifices (Figure 1). We developed the control software for FLEXMIN using Model-Based Design. This approach made it possible for us to model the robot’s kinematics, design a control system for its 20 motors, and generate control code for a real-time target, all from one environment.

The FLEXMIN Hardware Architecture

The FLEXMIN system consists of two hardware subsystems: a haptic interface and an intracorporal robot. The real-time control system interprets the surgeon’s movements at the haptic interface and translates them into motor commands that produce movements at the robot’s end effector—for example, a grasper, needle holder, or other instrument—inside the abdominal cavity.

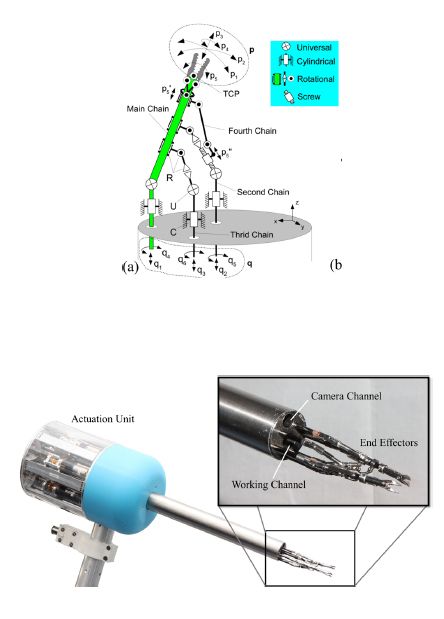

A 40 mm-diameter tube houses the intracorporal robot's two arms and an endoscopic camera that enables the surgeon to see the end effector at the end of each arm. The arms are actuated with a linked tripod structure that we designed in MATLAB®. Motors move three parallel rods within this kinematic structure to accurately position the tool center point (TCP) (Figure 2). Each rod is driven by two brushless DC motors, one for translational movement and the other for rotational movement. The resulting 12 motors (two per rod, three rods per arm, two arms) are housed in an actuation unit fixed to the tube and connected via an EtherCAT link to the system’s real-time computers.

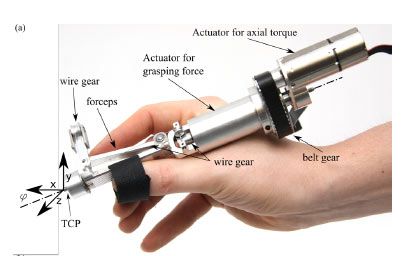

The FLEXMIN haptic interface is manipulated directly by the surgeon. Its structure approximates the tripod structure used in the intracorporal robot (Figure 3). Force feedback for grasping and axial torque is generated using two brushless DC servomotors, while three additional motors provide haptic feedback for moving the TCP in three dimensions. Commanded TCP coordinates are measured using rotational encoders on the motors. Like the components in the intracorporal robot, the encoders and motors in the haptic interface are linked to real-time target computers via the EtherCAT network.

Designing and Implementing the Real-Time Controller

One of our first control design challenges was to translate the movements of the haptic interface in three dimensions into corresponding movements of the TCP. We accomplished this using two MATLAB scripts. The first uses data from the motor encoders in the haptic interface to calculate the desired position of the TCP in Cartesian space. The second uses this TCP position to calculate the corresponding positions of the three rods in the arm, as well as the motor commands needed to reach those positions.
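The two-stage mapping described above can be illustrated with a minimal Python sketch. It is not the FLEXMIN kinematics: it assumes a simplified linear-delta geometry in which each rod slides along the tube axis and reaches the TCP through a rigid link, and it assumes one encoder per Cartesian axis in the haptic interface. All constants and function names (`encoders_to_tcp`, `tcp_to_rods`, `COUNTS_PER_MM`, and so on) are hypothetical and chosen only for illustration.

```python
import math

# All constants below are illustrative assumptions, not FLEXMIN parameters.
COUNTS_PER_MM = 100.0    # hypothetical encoder resolution after calibration
MOTION_SCALE = 0.5       # hypothetical hand-to-instrument motion scaling
LINK_LENGTH_MM = 30.0    # hypothetical rigid link between each rod tip and the TCP
ROD_RADIUS_MM = 10.0     # hypothetical radius of the circle the rods sit on
ROD_ANGLES = [math.radians(a) for a in (90.0, 210.0, 330.0)]


def encoders_to_tcp(counts):
    """Stage 1: map three encoder readings to a desired TCP position in mm.

    Assumes one encoder per Cartesian axis, which is a simplification.
    """
    return tuple(MOTION_SCALE * c / COUNTS_PER_MM for c in counts)


def tcp_to_rods(tcp):
    """Stage 2: inverse kinematics of the simplified tripod.

    Rod i sits at a fixed (xi, yi) and its tip at height zi satisfies
    (x - xi)^2 + (y - yi)^2 + (z - zi)^2 = LINK_LENGTH_MM^2.
    """
    x, y, z = tcp
    rods = []
    for angle in ROD_ANGLES:
        xi = ROD_RADIUS_MM * math.cos(angle)
        yi = ROD_RADIUS_MM * math.sin(angle)
        reach_sq = LINK_LENGTH_MM ** 2 - (x - xi) ** 2 - (y - yi) ** 2
        if reach_sq < 0.0:
            raise ValueError("TCP target is outside the reachable workspace")
        # Pick the solution with the rod tip behind the TCP along the tube axis.
        rods.append(z - math.sqrt(reach_sq))
    return rods


if __name__ == "__main__":
    target = encoders_to_tcp((0, 0, 4000))
    print("TCP target (mm):", target)
    print("Rod positions (mm):", tcp_to_rods(target))
```

Keeping the two stages as separate functions mirrors the split into two scripts: the Cartesian TCP position is the only quantity passed between them, so either side can be changed without touching the other.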

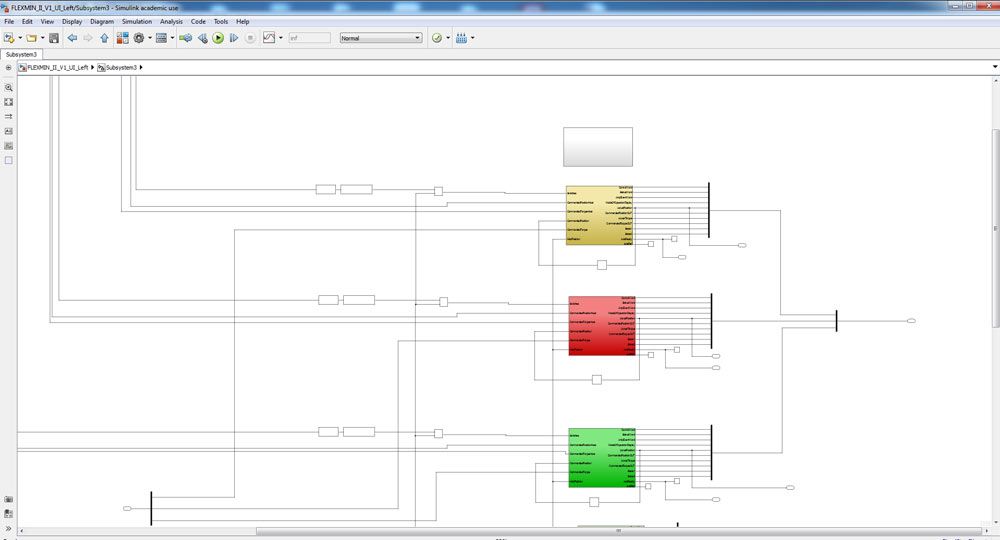

Our Simulink® controller model incorporates these MATLAB scripts and includes EtherCAT blocks to send data to and receive data from the robot’s motors and sensors via the EtherCAT bus. The model also includes an extensive state chart modeled with Stateflow®. We use the state chart to initialize the motor controllers and manage the state of the entire FLEXMIN system.
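To give a rough idea of what such a supervisory state chart does, here is a minimal transition-table sketch in Python. The states and events (`IDLE`, `INITIALIZING`, `drives_ready`, and so on) are hypothetical; the actual Stateflow chart is described above as extensive and is not reproduced here.

```python
from enum import Enum, auto


class State(Enum):
    IDLE = auto()
    INITIALIZING = auto()
    READY = auto()
    TELEOPERATING = auto()
    FAULT = auto()


# (current state, event) -> next state. All names are hypothetical.
TRANSITIONS = {
    (State.IDLE, "power_on"): State.INITIALIZING,
    (State.INITIALIZING, "drives_ready"): State.READY,
    (State.READY, "enable"): State.TELEOPERATING,
    (State.TELEOPERATING, "disable"): State.READY,
    (State.FAULT, "reset"): State.IDLE,
}


def step(state, event):
    """Return the next state; a 'fault' event forces FAULT from any state."""
    if event == "fault":
        return State.FAULT
    # Events with no matching transition leave the state unchanged.
    return TRANSITIONS.get((state, event), state)


def run(events, state=State.IDLE):
    """Feed a sequence of events through the chart and return the final state."""
    for event in events:
        state = step(state, event)
    return state


if __name__ == "__main__":
    print(run(["power_on", "drives_ready", "enable"]))
```

In this sketch, teleoperation can only be reached after initialization has completed, and a fault in any state requires an explicit reset before the sequence can start again.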

To implement the haptic feedback, we measure the interaction force at the robot’s grasper, using six sensors on the actuation unit. After applying a bandpass filter to this measurement data, we use it to calculate the force applied to the three rods in the arm. We perform additional kinematic calculations to determine the TCP force based on the position of the rods. These calculations enable us to determine the actual force being applied at the grasper—for example, when a surgeon grasps tissue and begins to pull it. We developed a Simulink model that uses this force measurement information to control the motors of the haptic interface and provide the surgeon with haptic feedback of forces up to 15 newtons updated up to 40 times per second (Figure 4).

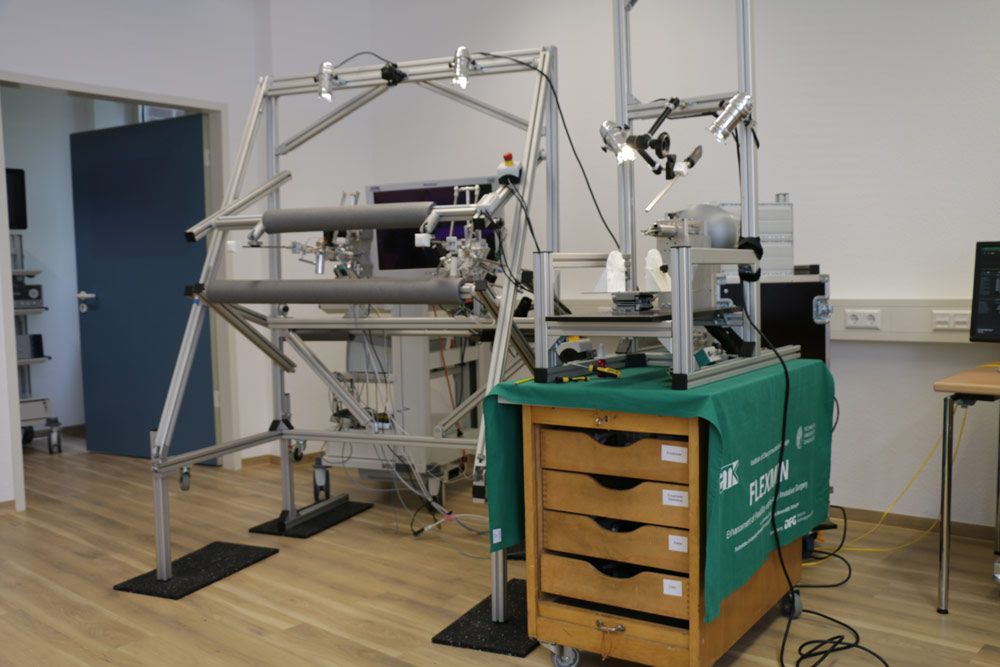
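Two steps in this force-feedback path, smoothing a sensor channel and bounding the force sent to the haptic interface, can be sketched in a few lines of Python. This is a sketch under stated assumptions: a first-order low-pass filter is used purely as a simple stand-in and is not the filter design used in FLEXMIN, the kinematic mapping from sensor readings to TCP force is omitted, and the names `LowPass` and `limit_force` are hypothetical. Only the 15 N bound comes from the text.

```python
import math

MAX_FORCE_N = 15.0  # upper bound on displayed force, taken from the text


class LowPass:
    """First-order IIR smoother: y[k] = y[k-1] + alpha * (x[k] - y[k-1])."""

    def __init__(self, alpha):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.y = None

    def update(self, x):
        # Seed the filter with the first sample to avoid a start-up transient.
        self.y = x if self.y is None else self.y + self.alpha * (x - self.y)
        return self.y


def limit_force(force, max_norm=MAX_FORCE_N):
    """Scale a 3-D force vector so that its magnitude never exceeds max_norm."""
    norm = math.sqrt(sum(f * f for f in force))
    if norm <= max_norm:
        return tuple(force)
    return tuple(f * max_norm / norm for f in force)


if __name__ == "__main__":
    channel = LowPass(alpha=0.5)
    print([channel.update(x) for x in (0.0, 10.0, 10.0)])
    print(limit_force((0.0, 30.0, 40.0)))
```

Limiting the magnitude of the vector, rather than clipping each axis separately, preserves the direction of the force that the surgeon feels.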

When we were ready for hardware testing, we generated C code from our models using MATLAB Coder™ and Simulink Coder™, and used Simulink Real-Time™ to run the code on two real-time PCs (one for each arm), each equipped with a 3 GHz Intel® Core™ 2 Duo processor. This setup enabled us to test, debug, and refine the real-time performance of the intracorporal robot and haptic feedback interface in the lab (Figure 5).

Beyond development, we also run the lab setup in a standalone mode in which the computers boot the most recent stable release of our software. This lets us demonstrate the system to interested researchers with minimal preparation time.

Hands-On Surgical Tests and Next Steps

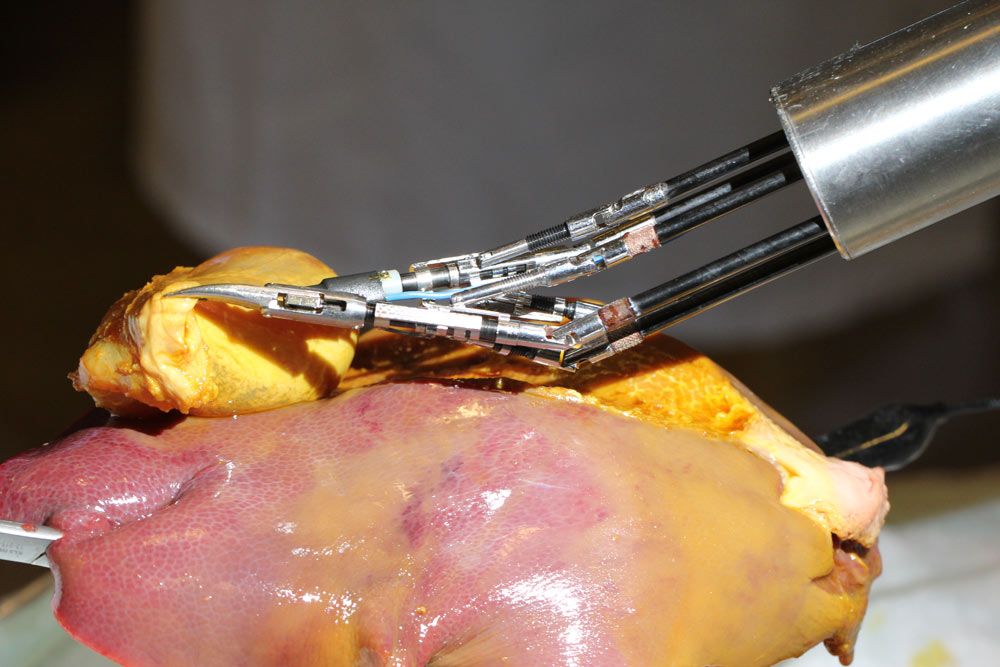

We conducted a number of hands-on tests with surgeons and students from the University Hospital Tübingen. In addition to basic suturing tests, participants evaluated the dexterity and usability of FLEXMIN in performing a gall bladder resection in a porcine model (Figure 6). Participants reported that they were impressed by the responsiveness of the system and that they noticed no lag between their hand movements and the movement of the instruments. They also reported being impressed with the intuitiveness of the system and the amount of room it afforded for performing surgical maneuvers within the abdominal cavity.

In future versions of FLEXMIN, we plan to incorporate preprogrammed movements (for example, the ability to autonomously pass a needle through two marked locations) as well as haptic feedback on grasping pressure. These improvements could be implemented by our colleagues or even by new students who join the group. One of the great advantages of using MATLAB and Simulink in our research is that new team members can come up to speed quickly on the project. Virtually all undergraduate and graduate students at TU Darmstadt have experience with MATLAB and Simulink from their coursework. Further, the modular approach we took with our models enables group members to work independently on their respective modules and then assemble the modules into a complete system. Taken together, these factors make it easy for us to collaborate as a group and even pass the project on to others.

Published 2018