Maze Solver (Reinforcement Learning)

Refer to Section 4.1 of Reinforcement Learning: An Introduction by R. S. Sutton and A. G. Barto (MIT Press).

Value Iteration:

Dynamic programming (DP) algorithms solve finite Markov decision processes (MDPs). Policy evaluation is the (typically iterative) computation of the value function for a given policy. Policy improvement is the computation of an improved policy given the value function for that policy. Combining these two computations yields policy iteration and value iteration, the two most popular DP methods. Either can reliably compute optimal policies and value functions for finite MDPs, given complete knowledge of the MDP.
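
The two DP building blocks can be sketched in Python. This is a minimal illustration under stated assumptions, not the submission's MATLAB code: the maze layout `MAZE`, the discount `GAMMA = 0.9`, the reward of -1 per move (`STEP_REWARD`), and every function name are hypothetical choices for this example. `evaluate_policy` performs iterative (in-place) policy evaluation, `improve_policy` acts greedily on the resulting values, and `policy_iteration` alternates the two until the policy stops changing.

```python
"""Policy iteration on a tiny deterministic maze (illustrative sketch)."""

# Hypothetical maze: '#' = wall, 'G' = goal (terminal), '.' = free cell.
MAZE = [
    "....",
    ".##.",
    "...G",
]
ACTIONS = {"U": (-1, 0), "D": (1, 0), "L": (0, -1), "R": (0, 1)}
GAMMA = 0.9         # assumed discount factor
STEP_REWARD = -1.0  # assumed reward for every move


def states(maze):
    """All non-wall cells as (row, col) tuples."""
    return [(r, c) for r, row in enumerate(maze)
            for c, ch in enumerate(row) if ch != "#"]


def is_goal(maze, s):
    return maze[s[0]][s[1]] == "G"


def step(maze, s, a):
    """Deterministic move; bumping into a wall or the border leaves s unchanged."""
    dr, dc = ACTIONS[a]
    r, c = s[0] + dr, s[1] + dc
    if 0 <= r < len(maze) and 0 <= c < len(maze[0]) and maze[r][c] != "#":
        return (r, c)
    return s


def evaluate_policy(maze, policy, theta=1e-8):
    """Iterative policy evaluation with in-place sweeps until changes fall below theta."""
    v = {s: 0.0 for s in states(maze)}
    while True:
        delta = 0.0
        for s, a in policy.items():  # policy covers non-terminal states only
            new = STEP_REWARD + GAMMA * v[step(maze, s, a)]
            delta = max(delta, abs(new - v[s]))
            v[s] = new
        if delta < theta:
            return v  # terminal (goal) cells keep value 0


def improve_policy(maze, v, old_policy, tol=1e-6):
    """Greedy improvement; keep the old action unless another beats it by more than tol."""
    new_policy = {}
    for s, old_a in old_policy.items():
        q = {a: STEP_REWARD + GAMMA * v[step(maze, s, a)] for a in ACTIONS}
        best = max(q, key=q.get)
        new_policy[s] = best if q[best] > q[old_a] + tol else old_a
    return new_policy


def policy_iteration(maze):
    # Arbitrary initial policy: "go up" in every non-terminal cell.
    policy = {s: "U" for s in states(maze) if not is_goal(maze, s)}
    while True:
        v = evaluate_policy(maze, policy)
        new_policy = improve_policy(maze, v, policy)
        if new_policy == policy:  # stable policy: no action changed
            return policy, v
        policy = new_policy


if __name__ == "__main__":
    policy, v = policy_iteration(MAZE)
    for r, row in enumerate(MAZE):
        print(" ".join(ch if ch in "#G" else policy[(r, c)]
                       for c, ch in enumerate(row)))
```

Ties between equally good actions are resolved in favor of the current action (the `tol` argument), which keeps the loop from flipping between equivalent policies because of floating-point noise in the evaluated values.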

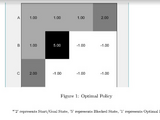

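◮ Problem: find the optimal policy π

◮ Solution: iterative application of the Bellman optimality backup

◮ v_1 → v_2 → ... → v_∗

◮ Using synchronous backups: at each iteration k + 1, for all states s ∈ S, update v_{k+1}(s) from v_{k}(s') via v_{k+1}(s) = max_a Σ_{s', r} p(s', r | s, a) [r + γ v_{k}(s')]

◮ For γ < 1, convergence to v_∗ follows from the Bellman optimality backup being a γ-contraction in the max norm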

◮ Unlike policy iteration, there is no explicit policy

◮ Intermediate value functions may not correspond to any policy
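
The bullets above can be sketched in Python on a small hypothetical grid maze. The maze, `GAMMA`, `STEP_REWARD`, and the names `backup` and `value_iteration` are illustrative assumptions, not the submission's MATLAB implementation. Each sweep builds a fresh table `v_new` entirely from the previous table (a synchronous backup), no policy is stored during the sweeps, and a greedy policy is read off the converged values only at the end.

```python
"""Value iteration with synchronous Bellman optimality backups (illustrative sketch)."""

# Hypothetical maze: '#' = wall, 'G' = goal (terminal, value 0), '.' = free cell.
MAZE = [
    "....",
    ".##.",
    "...G",
]
ACTIONS = {"U": (-1, 0), "D": (1, 0), "L": (0, -1), "R": (0, 1)}
GAMMA = 0.9         # assumed discount factor
STEP_REWARD = -1.0  # assumed reward for every move


def step(maze, s, a):
    """Deterministic move; bumping into a wall or the border leaves s unchanged."""
    dr, dc = ACTIONS[a]
    r, c = s[0] + dr, s[1] + dc
    if 0 <= r < len(maze) and 0 <= c < len(maze[0]) and maze[r][c] != "#":
        return (r, c)
    return s


def backup(maze, v, s):
    """Bellman optimality backup for a deterministic maze: max_a [r + GAMMA * v(s')]."""
    return max(STEP_REWARD + GAMMA * v[step(maze, s, a)] for a in ACTIONS)


def value_iteration(maze, theta=1e-10, max_iters=10_000):
    cells = [(r, c) for r, row in enumerate(maze)
             for c, ch in enumerate(row) if ch != "#"]
    goal = {s for s in cells if maze[s[0]][s[1]] == "G"}
    v = {s: 0.0 for s in cells}  # v_0 = 0 everywhere
    iters = 0
    while iters < max_iters:
        # Synchronous backup: every v_{k+1}(s) is computed from the old table v_k.
        v_new = {s: 0.0 if s in goal else backup(maze, v, s) for s in cells}
        delta = max(abs(v_new[s] - v[s]) for s in cells)
        v, iters = v_new, iters + 1
        if delta < theta:
            break
    # No explicit policy is kept during the sweeps; extract a greedy one at the end.
    policy = {
        s: max(ACTIONS, key=lambda a: STEP_REWARD + GAMMA * v[step(maze, s, a)])
        for s in cells if s not in goal
    }
    return v, policy, iters


if __name__ == "__main__":
    v, policy, iters = value_iteration(MAZE)
    print(f"converged after {iters} sweeps")
    for r, row in enumerate(MAZE):
        print(" ".join(f"{v[(r, c)]:7.3f}" if ch != "#" else "   #   "
                       for c, ch in enumerate(row)))
```

Calling `value_iteration(MAZE, max_iters=1)` returns v_1, in which every non-goal cell has value -1. This illustrates the last bullet: cell (0, 0) is five moves from the goal, so under these assumed rewards no policy can give it a value of -1 (that would require reaching the goal in a single move).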

Cite As

Bhartendu (2024). Maze Solver (Reinforcement Learning) (https://www.mathworks.com/matlabcentral/fileexchange/63062-maze-solver-reinforcement-learning), MATLAB Central File Exchange. Retrieved .

Platform Compatibility: Windows, macOS, Linux

| Version | Published | Release Notes |
|---|---|---|
| 1.0.0.0 | | |