Explore Status and Quality of Testing Activities Using Model Testing Dashboard

The Model Testing Dashboard collects metric data from the model design and testing artifacts in a project to help you assess the status and quality of your requirements-based model testing.

The dashboard analyzes the artifacts in a project, such as requirements, models, and test results. Each metric in the dashboard measures a different aspect of the quality of the testing of your model and reflects guidelines in industry-recognized software development standards, such as ISO 26262 and DO-178C.

This example shows how to assess the testing status of a unit by using the Model Testing Dashboard. If the requirements, models, or tests in your project change, use the dashboard to assess the impact on testing and update the artifacts to achieve your testing goals. Collecting results for each of the metrics requires licenses for Simulink Check™, Requirements Toolbox™, Simulink Coverage™, and Simulink Test™. If metric results have been collected, viewing the results requires only a Simulink Check license. For more information, see Model Testing Metrics.

Explore Testing Artifacts and Metrics for Project

1. Open the project that contains the models and testing artifacts. For this example, in the MATLAB® Command Window, enter:

openProject("cc_CruiseControl");

2. On the Project tab, click Model Testing Dashboard to open the Dashboard window.

3. Open the Model Testing Dashboard by clicking Model Testing in the dashboard gallery in the toolstrip.

The first time that you open the dashboard for a project, the dashboard identifies the artifacts in the project and collects traceability information. The Project panel displays artifacts from the current project that are compatible with the current dashboard.

4. Collect and view the metric results for the unit cc_DriverSwRequest by selecting cc_DriverSwRequest in the Project panel. The dashboard displays metric results for the unit that you select in the Project panel.

When you initially select a unit in the Project panel, the dashboard automatically collects the metric results for the unit. To collect metrics for each of the units in the project, click Collect > Collect All. If you previously collected metric data for a unit, the dashboard populates with the existing data.
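The same collection step can also be scripted. The following is a minimal sketch, assuming the metric.Engine API from Simulink Check is available in your release and that the required licenses are installed. The variable names are illustrative, and the set of metric identifiers returned depends on the release.

```matlab
% Open the example project (same command as in step 1).
openProject("cc_CruiseControl");

% Create a metric engine for the current project.
metric_engine = metric.Engine();

% List the metric identifiers that this release makes available.
metric_ids = getAvailableMetricIds(metric_engine);

% Collect results for the listed metrics across the units in the project.
execute(metric_engine, metric_ids);
```

Results collected this way are stored with the project, so the dashboard should populate with them the next time you open it.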

View Traceability of Design and Testing Artifacts

The Artifacts panel shows artifacts that trace to the unit. Expand the folders and subfolders to see the artifacts in the project that trace to the unit selected in the Project panel. To view more information for a folder or subfolder, click the three dots and click the Help icon, if available.

The traced artifacts include:

Functional Requirements — Requirements of Type Functional that are either implemented by or upstream of the unit. Use Requirements Toolbox to create or import the requirements in a requirements file (.slreqx).

Design artifacts — The model file that contains the unit that you test and the libraries, data dictionaries, and other design artifacts that the model uses.

Tests — Tests and test harnesses that trace to the unit. Create the tests by using Simulink Test. A test can be either a test iteration in a test case or a test case without iterations.

Test Results — Results of the tests for the unit. The dashboard shows the latest results from the tests.

An artifact appears under the Trace Issues folder if there are unexpected requirement links, requirement links that are broken or not supported by the dashboard, or artifacts that the dashboard cannot trace to a unit. The folder includes artifacts that are missing traceability and artifacts that the dashboard is unable to trace. If an artifact generates an error during traceability analysis, it appears under the Errors folder. For more information about artifact tracing issues and errors, see Trace Artifacts to Units and Components.

Navigate to the requirement artifact for Cancel Switch Detection. Expand cc_DriverSwRequest > Functional Requirements > Implemented > cc_SoftwareReqs.slreqx and select the requirement Cancel Switch Detection. To view the path from the project root to the artifact, click the three dots to the right of the artifact name. You can use the menu button to the right of the search bar to collapse or expand the artifact list, or to restore the default view of the artifact list.

For more information on how the dashboard traces the artifacts shown in the Artifacts panel, see Manage Project Artifacts for Analysis in Dashboard.

View Metric Results for Unit

You can collect and view model testing metric results for each unit that appears in the Project panel. To view the results for the unit cc_DriverSwRequest, in the Project panel, click cc_DriverSwRequest. When you click on a unit, the dashboard shows the Model Testing information for that unit. The top of the dashboard tab shows the name of the unit, the data collection timestamp, and the user name that collected the data.

If you collect results and then make a change to an artifact in the project, the dashboard warns you to refresh the dashboard and shows a Stale icon on widgets that might show outdated data.

When you see the warning banner, refresh the dashboard by clicking the Collect button. For the unit in this example, the metric results in the dashboard are not stale.

The dashboard widgets summarize the metric data results and show testing issues you can address, such as:

Missing traceability between requirements and tests

Tests or requirements with a disproportionate number of links between requirements and tests

Failed or disabled tests

Missing model coverage

You can use the overlays in the Model Testing Dashboard to see if the metric results for a widget are compliant, non-compliant, or generate a warning that the metric results should be reviewed. Results are compliant if they show full traceability, test completion, or model coverage. The overlay appears on the widgets that have results in that category. You can see the total number of widgets in each compliance category in the top-right corner of the dashboard.

To see the compliance thresholds for a metric, point to the overlay icon.

You can control which types of compliance overlays appear in the dashboard by selecting a threshold setting from the top-left corner of the dashboard.

The threshold settings allow you to choose between:

No Thresholds - The dashboard does not show compliance overlays.

Testing without Requirements - The dashboard shows compliance overlays only for testing metrics.

Requirements-Based Testing - The dashboard shows compliance overlays for both requirements metrics and testing metrics.

By default, the dashboard shows compliance overlays for both requirements metrics and testing metrics. You can also hide overlay icons by clicking a selected category in the Overlays section of the toolstrip. Widgets appear as compliant or non-compliant depending on the compliance thresholds for the underlying metric. For more information on the compliance thresholds for each metric, see Model Testing Metrics.

To explore the data in more detail, click an individual metric widget to open the Metric Details. For the selected metric, a table displays a metric value for each artifact. The table provides hyperlinks to open the artifacts so that you can get detailed results and fix the artifacts that have issues. When exploring the tables, note that you can sort and filter results by using the options in the column header.

Evaluate Testing and Traceability of Requirements

A standard measure of testing quality is the traceability between individual requirements and the tests that verify them. To assess the traceability of your tests and requirements, use the metric data in the Test Analysis section of the dashboard. You can quickly find issues in the requirements and tests by using the data summarized in the widgets. Click a widget to view a table with detailed results and links to open the artifacts.

Requirements Missing Tests

In the Requirements Linked to Tests section, the Unlinked widget indicates how many requirements are missing links to tests. To address unlinked requirements, create tests that verify each requirement and link those tests to the requirement. The Requirements with Tests gauge widget shows the linking progress as the percentage of requirements that have tests.

Click any widget in the section to see the detailed results in the Requirement linked to tests table. For each requirement artifact, the table shows the source file that contains the requirement and whether the requirement is linked to at least one test. When you click the Unlinked widget, the table is filtered to show only requirements that are missing links to tests.
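The list of unlinked requirements can also be retrieved in a script. This is a minimal sketch, assuming the metric.Engine API from Simulink Check and assuming that the metric identifier RequirementWithTestCase is available in your release and returns a 0 or 1 value per requirement. Confirm the identifier with getAvailableMetricIds before relying on it.

```matlab
% Assumes the cc_CruiseControl project is already open.
metric_engine = metric.Engine();

% Assumed metric identifier: one result per requirement, with Value equal
% to 1 if the requirement is linked to at least one test and 0 otherwise.
metric_id = 'RequirementWithTestCase';
execute(metric_engine, metric_id);
results = getMetrics(metric_engine, metric_id);

% Print the name of each requirement that has no linked test.
for n = 1:length(results)
    if ~results(n).Value
        disp(['Unlinked requirement: ', results(n).Artifacts(1).Name])
    end
end
```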

Requirements with Disproportionate Numbers of Tests

The Tests per Requirement section summarizes the distribution of the number of tests linked to each requirement. For each value, a colored bin indicates the number of requirements that are linked to that number of tests. Darker colors indicate more requirements. If a requirement has too many tests, the requirement might be too broad, and you may want to break it down into multiple, more granular requirements and link each of those requirements to the respective tests. If a requirement has too few tests, consider adding more tests and linking them to the requirement.

To see the requirements that have a certain number of tests, click the corresponding number to open a filtered Tests per requirement table. For each requirement artifact, the table shows the source file that contains the requirement and the number of linked tests. To see the results for each of the requirements, in the Linked Tests column, click the filter icon, then select Clear Filters.
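The binned distribution behind this section can be inspected in a script as well. The sketch below assumes the metric.Engine API from Simulink Check, the metric identifier TestCasesPerRequirementDistribution, and a result value that is a structure with BinEdges and BinCounts fields. Treat all three as assumptions to verify against the Model Testing Metrics reference for your release.

```matlab
% Assumes the cc_CruiseControl project is already open.
metric_engine = metric.Engine();

% Assumed metric identifier for the tests-per-requirement distribution.
metric_id = 'TestCasesPerRequirementDistribution';
execute(metric_engine, metric_id);
results = getMetrics(metric_engine, metric_id);

% Assumed result layout: Value is a struct with BinEdges and BinCounts.
for n = 1:length(results)
    distribution = results(n).Value;
    disp('Bin edges (number of tests per requirement):')
    disp(distribution.BinEdges)
    disp('Bin counts (number of requirements in each bin):')
    disp(distribution.BinCounts)
end
```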

Tests Missing Requirements

In the Tests Linked to Requirements section, the Unlinked widget indicates how many tests are not linked to requirements. To address unlinked tests, add links from these tests to the requirements they verify. The Tests with Requirements gauge widget shows the linking progress as the percentage of tests that link to requirements.

Click any widget in the section to see detailed results in the Tests linked to requirements table. For each test artifact, the table shows the source file that contains the test and whether the test is linked to at least one requirement. When you click the Unlinked widget, the table is filtered to show only tests that are missing links to requirements.

Tests with Disproportionate Numbers of Requirements

The Requirements per Test widget summarizes the distribution of the number of requirements linked to each test. For each value, a colored bin indicates the number of tests that are linked to that number of requirements. Darker colors indicate more tests. If a test has too many or too few requirements, it might be more difficult to investigate failures for that test, and you may want to change the test or requirements so that they are easier to track. For example, if a test verifies many more requirements than the other tests, consider breaking it down into multiple smaller tests and linking them to the requirements.

To see the tests that have a certain number of requirements, click the corresponding bin to open the Requirements per test table. For each test artifact, the table shows the source file that contains the test and the number of linked requirements. To see the results for each of the tests, in the Linked Requirements column, click the filter icon, then select Clear Filters.
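A per-test listing similar to the Requirements per test table can be printed from a script. This is a minimal sketch, assuming the metric.Engine API from Simulink Check and assuming that the metric identifier RequirementsPerTestCase is available in your release and returns one numeric value per test.

```matlab
% Assumes the cc_CruiseControl project is already open.
metric_engine = metric.Engine();

% Assumed metric identifier: one result per test, with Value equal to the
% number of requirements linked to that test.
metric_id = 'RequirementsPerTestCase';
execute(metric_engine, metric_id);
results = getMetrics(metric_engine, metric_id);

% Print each test name with its number of linked requirements.
for n = 1:length(results)
    disp(['Test: ', results(n).Artifacts(1).Name])
    disp(['  Number of requirements: ', num2str(results(n).Value)])
end
```

Tests that report a value much higher than the rest are candidates for splitting, as described above.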

Disproportionate Number of Tests of One Type

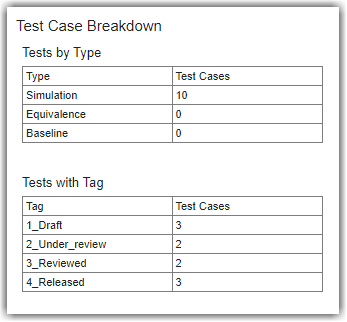

Use the Test Breakdown to decide if you want to add tests of a certain type, or with a certain tag, to your project.

The Component widget shows how many tests contribute to metric results for the unit. To see which tests are component tests, click the Component widget.

The Independent widget shows how many tests do not contribute to the metric results. To see which tests are independent tests, click the Independent widget. Independent tests can execute independently of the component and can behave differently depending on the conditions specified in the test harness. Therefore, these tests do not definitively indicate the quality of component testing and do not contribute to the other metric results in the Model Testing Dashboard. Independent tests include tests on libraries, subsystem references, and virtual subsystems.

The Test Cases by Type and Test Cases with Tag widgets show how many tests the unit has of each type and with each custom tag. In industry standards, tests are often categorized as normal tests or robustness tests. You can tag test cases with Normal or Robustness and see the total count for each tag by using the Test Cases with Tag widget.

To see the test cases of one type, click the corresponding row in the Test Cases by Type table to open the Test case type table. For each test case artifact, the table shows the source file that contains the test and the test type. To see results for each of the test cases, in the Type column, click the filter icon, then select Clear Filters.

To see the test cases that have a tag, click the corresponding row in the Test Cases with Tag table to open the Test case tags table. For each test case artifact, the table shows the source file that contains the test and the tags on the test case. To see results for each of the test cases, in the Tags column, click the filter icon, then select Clear Filters.

Analyze Test Results and Coverage

To see a summary of the test results and coverage measurements, use the widgets in the Simulation Test Result Analysis section of the dashboard. Find issues in the tests and in the model by using the test result metrics. Find coverage gaps by using the coverage metrics and add tests to address missing coverage.

Run the tests for the model and collect the dashboard metrics to check for model testing issues.

Tests That Have Not Passed

In the Model Test Status section:

The Failed widget indicates how many tests failed. Click on the Failed widget to view a table of the tests that failed. Click the hyperlink for each failed test artifact to open it in the Test Manager and investigate the artifacts that caused the failure. Fix the artifacts, re-run the tests, and export the results.

The Untested widget indicates how many tests do not have pass/fail criteria such as verify statements, custom criteria, baseline criteria, and logical or temporal assessments. If a test does not contain pass/fail criteria, then it does not verify the functionality of the linked requirement. Add one or more of these pass/fail criteria to your tests to help verify the functionality of your model.

The Not Run and Disabled widgets indicate how many tests for the unit have not run.

You can click the widgets to view a filtered table of metric results with hyperlinks to the source files for the tests and results. The dashboard analyzes only the latest test result that it traces to each test.

Missing Coverage

The Model Coverage subsection shows whether there are model elements that are not covered by the tests. If one of the coverage types shows less than 100% coverage, you can click the dashboard widgets to investigate the coverage gaps. Add tests to cover the gaps or justify points that do not need to be covered. Then run the tests again and export the results. For more information on coverage justification, see Fix Requirements-Based Testing Issues.

To see the detailed results for one type of coverage, click the corresponding bar. For the model and test artifacts, the table shows the source file and the achieved and justified coverage.

Sources of Overall Achieved Coverage

The Achieved Coverage Ratio subsection shows the sources of the overall achieved coverage:

The Requirements-Based Tests section identifies the percentage of the overall achieved coverage coming from requirements-based tests. Requirements-based tests are tests that link to at least one requirement in the design. When you link a test to a requirement, you provide traceability between the test and the requirement that the test verifies. If you do not link a test to a requirement, it is not clear which aspect of the design the test verifies. If one of the coverage types shows that less than 100% of the overall achieved coverage comes from requirements-based tests, add links from the associated tests to the requirements they verify.

The Unit-Boundary Tests section identifies the percentage of the overall achieved coverage coming from unit-boundary tests. Unit-boundary tests are tests that test the whole unit. When you execute a test on the unit boundary, the test has access to the entire context of the unit design. If you test only the lower-level sub-elements in a design, the test might not capture the actual functionality of those sub-elements within the context of the whole unit. Types of sub-elements include subsystems, subsystem references, library subsystems, and model references. If one of the coverage types shows that less than 100% of the overall achieved coverage comes from unit-boundary tests, consider either adding a test that tests the whole unit or reconsidering the unit-model definition.

Industry-recognized software development standards recommend using requirements-based, unit-boundary tests to confirm the completeness of coverage. For more information, see Monitor Low-Level Test Results in the Model Testing Dashboard.
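To make the achieved coverage ratio concrete, the following sketch works through the arithmetic on hypothetical data. It assumes one plausible reading of the ratio: the share of the coverage objectives achieved by all tests that are also achieved by requirements-based tests alone. The vectors and variable names are invented for illustration and are not produced by the dashboard.

```matlab
% Hypothetical data: 8 coverage objectives (for example, decision outcomes).
% true means the objective was exercised by at least one test in the group.
coveredByAllTests = logical([1 1 1 1 1 1 0 0]);  % all tests for the unit
coveredByReqTests = logical([1 1 1 1 0 0 0 0]);  % requirements-based tests only

% Overall achieved coverage: covered objectives out of all objectives.
overallAchieved = 100 * nnz(coveredByAllTests) / numel(coveredByAllTests);

% Assumed ratio: share of the achieved coverage that the
% requirements-based tests account for.
ratioFromReqTests = 100 * nnz(coveredByReqTests & coveredByAllTests) ...
    / nnz(coveredByAllTests);

fprintf('Overall achieved coverage: %.1f%%\n', overallAchieved);
fprintf('Achieved coverage from requirements-based tests: %.1f%%\n', ...
    ratioFromReqTests);
```

In this hypothetical case, two of the six covered objectives are reached only by tests without requirement links, so linking those tests to the requirements they verify would raise the ratio toward 100%.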