Exploit Cointegrating Relationships to Impute Missing Data

This example shows how to impute missing observations in a time series by exploiting a cointegrating relationship between the series and at least one other fully observed series. The missing observations are assumed to be missing completely at random, as opposed to missing due to some systematic reason (for example, data disaggregation). The example uses short-, medium-, and long-term Canadian interest rate series to illustrate the procedure.

Typically, economic and financial measurements are sampled at regular intervals, and time series models assume regular series. When an input data set is not timestamped (matrices and tables), some Econometrics Toolbox™ functions remove entire rows from the input that contain at least one missing value before operating on the data. This list-wise deletion might result in an irregular time series. When you provide timestamped data, such as in timetables, those functions issue an error when the data contains missing values. Therefore, regardless of whether you provide timestamped data, you must clean each series of missing data and ensure the resulting set is regular before proceeding with analyses.

MATLAB® has several functions and applications to help you address missing values, such as the Clean Missing Data task. This functionality applies a variety of techniques, from zero-order hold through moving-mean or kNN interpolation. The supported imputation methods assume a spatial relationship among neighboring observations. These methods do not inform missing values from observations of related variables based on a known model of the variables. However, you can use MATLAB® to apply model-based or machine-learning imputation methods, such as fitting linear regression models or using decision trees with surrogate splitting. For financial applications, see Credit Scorecard Modeling with Missing Values.

For nonstationary time series data, linear regression models can result in spurious relationships among variables. Therefore, their use for addressing missing data in such series is limited. In some applications, for example, stock portfolio analysis, nonstationary series exhibit cointegration—the series change similarly, or a stationary linear combination of the series exists (for details, see Cointegration and Error Correction Analysis). If at least one such linear combination exists, you can build and estimate a vector error-correction (VEC) model over a fully observed, contiguous portion of the sample, and then forecast the estimated model into a horizon containing the missing values. Econometrics Toolbox VEC modeling tools use a two-step procedure to estimate VEC models, which includes maximum likelihood, and they forecast missing values using conditional expectations, given observed contemporaneous and past measurements of other variables. Therefore, this imputation method has statistical backing.

The example follows this procedure:

Confirm that each series is integrated.

Partition data into two contiguous sets: a fully observed set for fitting and validating the VEC model, and a set that contains the missing data. The latter set is the forecast horizon.

Partition the fully observed set into two contiguous sets: presample and estimation samples.

Determine cointegration rank.

Specify several candidate VEC models and fit them to the estimation sample.

Compare the models using goodness-of-fit statistics. Choose the optimal model.

Forecast the model into the forecast horizon. Provide the sample containing the missing data to obtain conditional forecasts of the missing observations.

Load Data

Load the Canadian inflation and interest rates data set, Data_Canada.mat. The data contains short-, medium-, and long-term interest rate series measured annually from 1954 through 1994 (last three variables of the data). For more details on the data set, enter Description at the command line.

load Data_Canada

varnames = DataTimeTable.Properties.VariableNames(3:5);

DTT = DataTimeTable(:,varnames);Determine whether any observations are missing.

anymissing(DTT)

ans = logical

0

No observations are missing.

Visually Confirm That Each Series Is Integrated

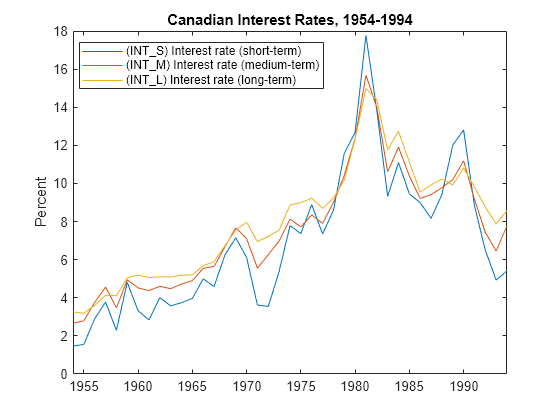

Plot each series.

plot(DTT.Time,DTT.Variables) title("Canadian Interest Rates, 1954-1994") ylabel("Percent") legend(series(3:5),Interpreter="none",Location="best")

Each series exhibits the behavior of a unit root series (they do not appear to jump around a level or deterministic trend). Also, the series appear to move together, suggesting that they are cointegrated (see Cointegration and Error Correction Analysis).

To statistically confirm that each series is integrated, see Unit Root Tests.

Partition Full Data Set

Suppose, arbitrarily, that during years 1986, 1989, 1990, and 1993, the short-term interest rate measurements are missing completely at random.

Partition the data set into a fully observed set, from 1954 through 1985, and a partially observed set, from 1986 through 1994.

DTT.Time.Format = "yyyy"; b = datetime(1986,1,1); % Separation point DTT_FO = DTT(DTT.Time < b,:); % Fully observed sample DTT_PO = DTT(DTT.Time >= b,:); % Partially observed sample numseries = width(DTT); T = height(DTT_FO)

T = 32

horizon = height(DTT_PO);

Enter NaN values for the short-term interest rates that are missing.

missdt = datetime([1986; 1989; 1990; 1993],1,1,Format="yyyy"); DTT_PO{missdt,"INT_S"} = NaN;

Partition Fully Observed Data Set for Model Building

Before partitioning the fully observed data set, you must decide on , where is the largest VEC model degree among all candidate models. Economic theory or domain knowledge usually informs the value of the maximum lag. This example uses . For more information about lag selection, see Time Series Regression IX: Lag Order Selection.

Partition the fully observed data set DTT_FO, which has observations, into these two contiguous data sets:

Presample containing the first observations in the sample. The presample has just enough observations to initialize the VEC model with the largest degree.

Estimation sample containing the next 28 observations.

p = 4; % q = p - 1 = 3 DTT_FO0 = DTT_FO(1:p,:); % Presample DTT_FOEst = DTT_FO((p+1):T,:); % Estimation sample

The imputation method in this example requires a fully observed training sample because VEC model estimation requires data with a regular timebase. In other words, if you try to estimate a VEC model with regular data containing missing observations, MATLAB takes one of these actions:

For input data in a matrix or table, MATLAB removes all observations (rows) containing missing data. This action can create an irregular series.

For input data in a timetable, MATLAB issues an error.

Determine Cointegration Rank

The cointegration rank is the number of independent linear combinations of the series that form a single stationary series. The error-correction term in the equation of a VEC model forms these linear combinations; the rank of its coefficient (called the impact matrix) is the cointegration rank . You must choose the rank when you build a VEC model.

Econometrics Toolbox has two tests to help determine the cointegration rank of a multivariate series: the Engle-Granger cointegration test (egcitest) and the Johansen cointegration test (jcitest). This example uses jcitest.

The Johansen cointegration test, and VEC modeling in general, require you to specify the Johansen form of the VEC model deterministic terms. The H1 form (the default) includes intercepts in the cointegration relation and the first differences of the response variables, the latter of which implies a linear trend in the levels of the response variables. A nonzero intercept in the cointegrating relations is usually appropriate because it implies the long-run average is nonzero. The plot of the series exhibits a linear time trend. Therefore, this example uses the H1 form.

Apply the Johansen cointegration test to the presample and estimation sample data. Conduct the test for each lagged difference in the model .

q = p - 1; numlags = 1:q; JCITbl = jcitest([DTT_FO0; DTT_FOEst],Lags=numlags)

JCITbl=3×7 table

r0 r1 r2 Model Lags Test Alpha

_____ _____ _____ ______ ____ _________ _____

t1 true false false {'H1'} 1 {'trace'} 0.05

t2 false false false {'H1'} 2 {'trace'} 0.05

t3 true false false {'H1'} 3 {'trace'} 0.05

Variables r0 through r2 in JCITbl specify candidate cointegration ranks for a test, where r0 tests , r1 tests , and so on. For each test (row), take the cointegration rank as the first candidate rank that fails to reject the null hypothesis (table value is false). The results are:

when

Lags=1.when

Lags=2. This result implies that the response series in first-differences is a stable VAR(1) model.when

Lags=3.

Programmatically extract the rank for each tested lag from the jcitest results.

FullJCITbl = [JCITbl{:,1:numseries} zeros(height(JCITbl),1)]; % Account for full-rank case

[rr,r] = find(~FullJCITbl);

[~,idx] = unique(rr);

r = r(idx) - 1;Specify and Estimate Candidate VEC Models

The candidate VEC models are:

VEC(1) with

VEC(2) with

VEC(3) with

For each model:

Create a candidate model template for estimation by using the

vecmfunction.Fit a candidate model to the estimation sample by using the

estimatefunction. Initialize the model by using the presample. Although models of lower lag order require fewer presample observations, you fit all models to the same data and initialize with the same observations so that you can compare the results fairly.Obtain summary statistics from estimation by using the

summarizefunction.Extract Akaike information criterion (AIC) from the summary statistics.

nummdls = numel(numlags); for j = nummdls:-1:1 % Reverse order to preallocate array of vecm objects Mdl = vecm(numseries,r(j),numlags(j)); EstMdl(j) = estimate(Mdl,DTT_FOEst,Presample=DTT_FO0); EstSum = summarize(EstMdl(j)); aic(j) = EstSum.AIC; end

Choose Model with Optimal Goodness-of-Fit

Find the model that minimizes the AIC.

[~,idx] = min(aic); BestMdl = EstMdl(idx)

BestMdl =

vecm with properties:

Description: "3-Dimensional Rank = 1 VEC(3) Model"

SeriesNames: "Y1" "Y2" "Y3"

NumSeries: 3

Rank: 1

P: 4

Constant: [1.50519 1.54011 0.688235]'

Adjustment: [3×1 matrix]

Cointegration: [3×1 matrix]

Impact: [3×3 matrix]

CointegrationConstant: 10.112

CointegrationTrend: 0

ShortRun: {3×3 matrices} at lags [1 2 3]

Trend: [3×1 vector of zeros]

Beta: [3×0 matrix]

Covariance: [3×3 matrix]

The optimal model of the three candidates is the VEC(3) model with .

Forecast Model to Impute Missing Observations

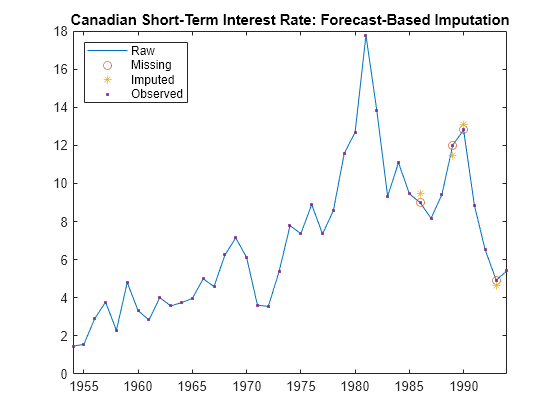

Obtain estimates of the missing values in the held-out, partially observed data by forecasting the best model using the forecast function. Supply the partially observed data by using the InSample argument. Initialize the model for the forecast by providing the estimation sample. Obtain forecasts of the missing observations, conditioned on the observed values, by providing the partially observed sample. Plot the results.

YF = forecast(BestMdl,horizon,DTT_FOEst,InSample=DTT_PO,ResponseVariables=varnames);

missidx = ismember(YF.Time,missdt);

imputeMissing = YF(missdt,"INT_S_Responses");

imputeMissingimputeMissing=4×1 timetable

Time INT_S_Responses

____ _______________

1986 9.4943

1989 11.445

1990 13.071

1993 4.6485

figure plot(DTT.Time,DTT.INT_S) hold on plot(missdt,DTT{missdt,"INT_S"},'o') plot(missdt,imputeMissing.INT_S_Responses,'*') obsidx = ~ismember(DTT.Time,missdt); plot(DTT.Time,DTT.INT_S,'.') title("Canadian Short-Term Interest Rate: Forecast-Based Imputation") legend(["Raw" "Missing" "Imputed" "Observed"],Location="northwest")

The imputations are fairly close to their corresponding observed values. In real applications, a comparison of imputed values and observations is not possible.

Alternatively, you can conduct a Monte Carlo study of the short-term rates from the missing periods by similarly using the simulate function. The mean and percentiles of the sample can serve as imputations and inferences, respectively.